Why the DRAM Structural Floor in the AI Era Makes This Time Different

Why AI demand adds a structural floor that transforms the typical memory cycle into a strong demand signal that is here to stay.

Last week on this newsletter, we discussed how TurboQuant works and how it signals another step along the path of algorithmic optimizations that are decoupled from the need for memory in AI systems. In the latest episode of Semi Doped, Austin (of ChipStrat) and I wondered why memory investors are the most skittish of the lot when it comes to innovation. It turns out there is a good reason: repeated boom-bust cycles, some of which we will visit in this post.

Still, worries about TurboQuant wrecking the memory industry have not abated. On the contrary, a new fear has been unlocked: the peak of the “hog cycle,” which seasoned investors often see as a strong signal of a memory peak and impending doom.

The counterargument is that “this time is different,” driven primarily by the insatiable demand for memory in AI deployments, and the token explosion in the era of agentic AI that is already here. The real question is whether the demand is high enough to serve as a fallback when the consumer segment finally “says no” to the extraordinary price hikes and cancels deployment of low/mid tier devices, and/or downgrades product specs to accommodate what memory is reasonably available.

In this article, we will investigate these arguments in greater detail, make reasonable guesses and inferences where possible, and attempt to have a balanced, realistic stance based on the current state of the industry.

Broadly, we will follow this line of inquiry:

The classic memory hog cycle isn’t dead — but AI has added a multi-layered structural floor that mutes the traditional ‘PC/smartphone makers say no’ warning signal and turns the 2026 pullback into a buying opportunity rather than the start of another 2019/2022-style bust.

Here is what we cover:

Hog Cycles as a Leading Memory Indicator

2026: PC/Smartphone Industry Says ‘No’

“This Time is Different” – But is it?

🔒Understanding the New AI Realities

🔒Memory as the Bottleneck

🔒Revisiting the Thesis and Risks

Note: This article does not constitute investment advice. Do your own research.

Hog Cycles as a Leading Memory Indicator

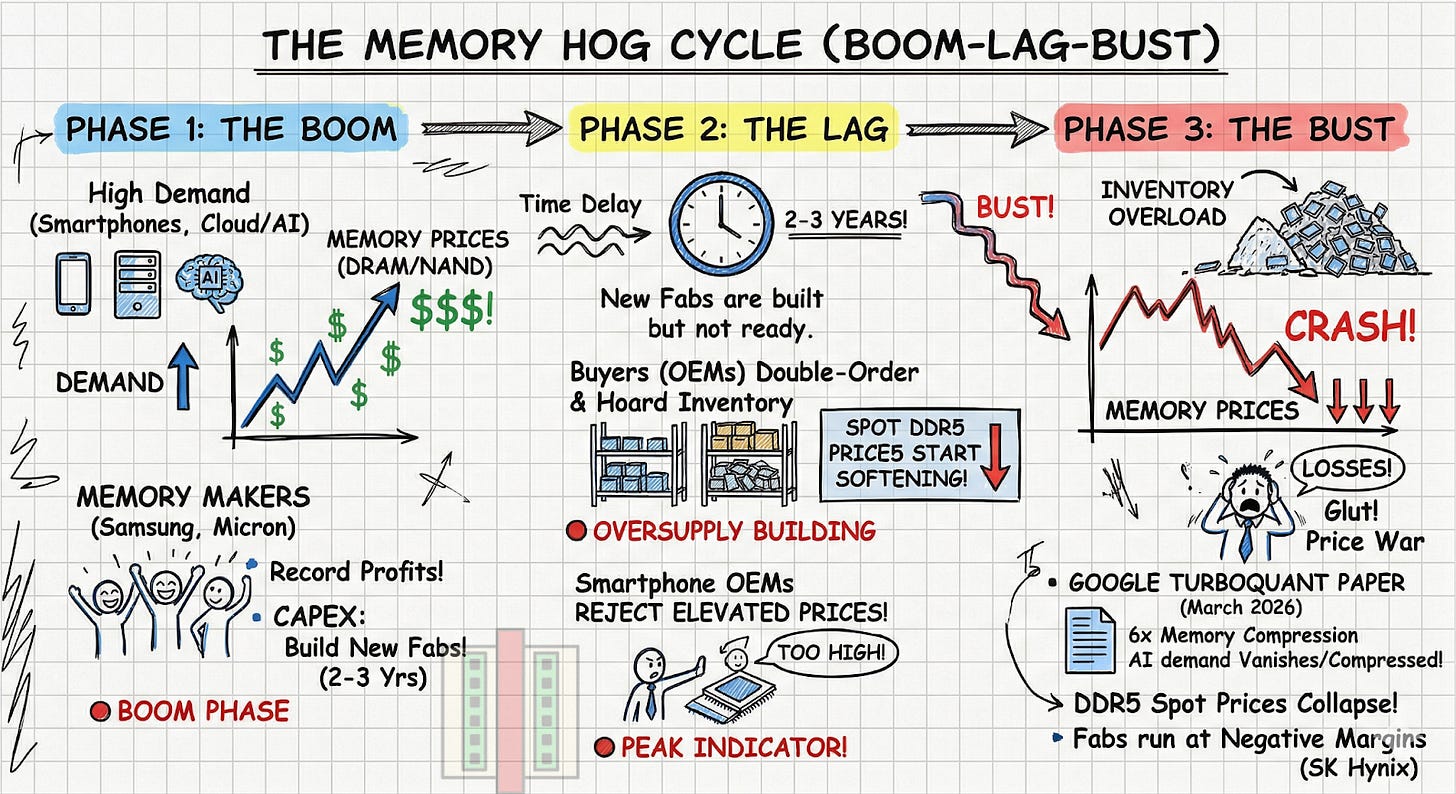

The classical hog cycle works like this: High pork prices lead farmers to breed more pigs. By the time the pigs mature, the market is flooded, prices crash, farmers stop breeding, and a shortage ensues and causes prices to spike again.

This applies directly to memory. A new technology (smartphones, cloud computing, AI) drives massive memory demand and prices soar. Memory makers enjoy record margins and heavily invest in expanding fab capacity. It takes years for that new capacity to come online. During this time, buyers double-order to secure supply, artificially inflating demand. The new fabs finally start producing exactly when downstream demand begins to cool. Supply floods the market, inventory piles up, and prices crash below the cost of production.
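The lag-driven dynamic described above is often formalized as a cobweb model. Here is a minimal Python sketch of it, assuming a linear demand curve and producers who size capacity from the price they saw `lag` periods earlier. Every parameter value is hypothetical and chosen only to illustrate the shape of the cycle, not to estimate the DRAM market.

```python
def simulate_hog_cycle(periods=12, lag=2, demand_intercept=100.0,
                       demand_slope=1.0, supply_slope=1.1, start_price=60.0):
    """Cobweb model: supply responds to the price seen `lag` periods earlier.

    All parameters are hypothetical and only illustrate the mechanism.
    """
    prices = [start_price] * lag  # seed price history before the simulation
    for _ in range(periods):
        # Capacity coming online now was decided `lag` periods ago,
        # based on the price producers observed at that time.
        supply = supply_slope * prices[-lag]
        # Linear demand: quantity = intercept - slope * price.
        # The market clears where quantity demanded equals supply.
        price = max((demand_intercept - supply) / demand_slope, 0.0)
        prices.append(price)
    return prices


if __name__ == "__main__":
    for period, price in enumerate(simulate_hog_cycle()):
        print(f"period {period:2d}: price {price:6.2f}")
```

In this toy setup, supply reacts more strongly to price than demand does (`supply_slope > demand_slope`), so each swing overshoots the previous one. Flip that relationship and the oscillations damp out instead.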

This cycle has played out at least twice in the last decade.

2019 Cloud Burst: The datacenter boom for cloud computing and crypto mining led to excess inventory when everybody suddenly stopped buying. DRAM prices plummeted by 50%.

2022 Covid Hangover: Covid-era shutdowns and the spike in PC/phone sales for remote work left OEMs with excess inventory that suddenly had no demand. The carnage was real: DRAM spot prices dropped >50%, server DRAM revenues collapsed, memory stocks fell 30-50%, and NAND sold for less than it took to make.

2026: PC/Smartphone Industry Says ‘No’

It seems like the memory downturn in the past week is one for the history books, largely driven by fears around TurboQuant and, more importantly, another catalyst worth discussing: the PC/smartphone industry. Shares of Micron, SanDisk, SK Hynix, and Samsung are all down 20-30% from their recent peaks. I’m guessing we will talk about this for years to come.

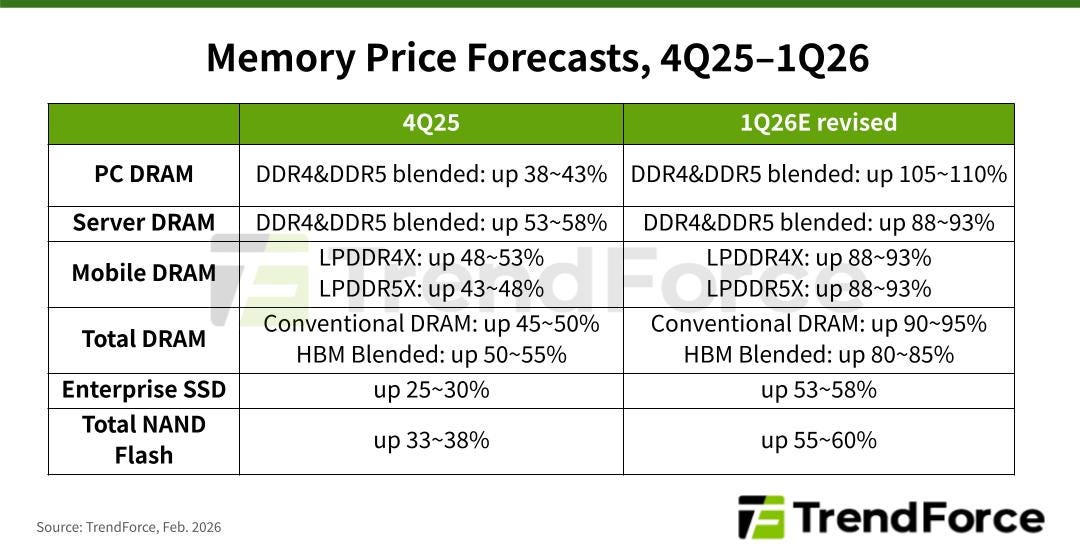

The PC/smartphone industry is the literal whale of the memory market, consuming massive amounts of DDR, LPDDR, and NAND flash storage. It has largely been squeezed out of the memory supply chain of late by large spikes in DRAM and NAND pricing, driven primarily by shortages stemming from the huge demand for AI accelerators, which consume nearly 70% of global high-end DRAM production. The recent TrendForce memory forecast below shows why pricing has reached a boiling point.

Prices for nearly every type of memory and storage have nearly doubled in a single quarter, which means that memory alone is estimated to occupy roughly 25-30% of a PC/smartphone bill of materials (BoM). Two other factors further compound the problem:

The long-term agreements for memory supply entered into after the Covid-era bust are coming to an end. Consumer OEMs are being forced into an open market where there is virtually no silicon left to purchase.

The timing is particularly devastating for the PC industry, as this hyper-inflationary spike collides directly with the Microsoft Windows 10 end-of-life hardware refresh cycle.
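To get a feel for what a price doubling does to the BoM share cited above, here is a small back-of-the-envelope sketch in Python. It assumes memory prices exactly double and that every other component cost stays flat, both simplifications of my own. The function names are hypothetical.

```python
def memory_bom_share(old_share, price_multiplier):
    """Memory's share of the BoM after memory prices change.

    Assumes all non-memory component costs stay flat (a simplification).
    """
    memory_cost = old_share * price_multiplier
    other_cost = 1.0 - old_share
    return memory_cost / (memory_cost + other_cost)


def implied_old_share(new_share, price_multiplier):
    """Invert memory_bom_share: the pre-spike share implied by today's share."""
    return new_share / (price_multiplier - new_share * (price_multiplier - 1.0))


if __name__ == "__main__":
    for share_now in (0.25, 0.30):
        before = implied_old_share(share_now, 2.0)
        print(f"{share_now:.0%} of BoM today implies about {before:.1%} before a 2x spike")
```

Under those assumptions, a 25-30% share today would correspond to roughly 14-18% of the BoM before the spike. That gap gives a rough sense of why OEMs are trimming specs or dropping low-margin models.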

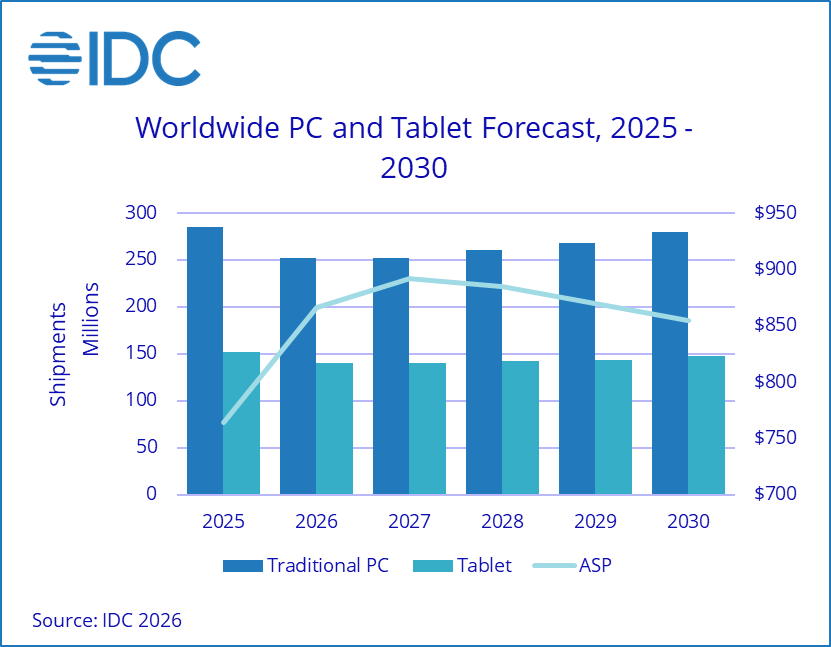

Dan Nystedt on X explains how the PC/smartphone industry has now drawn a line in the sand by planning “fewer (or zero) mid-to-low-end handsets in 2026,” a sign that seasoned memory investors often view as a time to sell. IDC reports that global PC shipments are expected to drop by 11.3% in 2026, a much larger drop than the 2.4% decline it estimated a quarter ago.

Conventional wisdom and the lessons of history both suggest that the memory market has peaked. But bulls argue that insatiable AI demand makes this time different.

“This Time is Different” – But is it?

The simple bull argument for the market is that the extraordinary demand for AI will soak up any short-term decline in demand from the PC/smartphone industry. That is, AI acts as a “fallback” when consumer demand drops, so memory prices stay unaffected. But how valid is this?

This argument holds for now because memory makers are happy to divert production lines towards HBM and server-grade DRAM, as Micron did by dropping the Crucial line of consumer-grade memory. The move has paid off so far: Micron’s last earnings call guided for an 81% gross margin, which would exceed even Nvidia’s if they deliver. Their non-HBM product lines are also more profitable now, thanks to inflated spot market pricing. Spot pricing is a double-edged sword, though, because in a deflationary market the same product lines will drag down revenue.

Interestingly, history shows that there has almost always been a series of overlapping technology S-curves that acted as a “fallback” and delayed (not avoided) the eventual memory market destruction.

Here are some examples.

2015-2016: Smartphone Maturation + Cloud Buildout

The Softening: In early 2015, the global smartphone adoption curve began to flatten and the upgrade cycle started stretching from 18 months to 24+ months. PC sales were also in a secular decline. With softening demand, smartphone OEMs started aggressively pushing back on memory contract prices.

The Fallback: The early cloud build-out meant that AWS, Azure, and Google Cloud were beginning to scale their massive data centers. This created a new, hungry sink for server DRAM and NAND SSDs, absorbing wafers that would have otherwise flooded the mobile and PC markets.

The Crash: The crash came around early 2016 because cloud buildouts could not effectively absorb the PC/smartphone softening, leading to an inventory glut and prices plummeting.

2018-2019: Smartphone Decline + Crypto Mania

The Softening: Smartphone sales went from slowing to actually contracting globally. At the same time, the memory prices from the 2017 cloud supercycle had gotten so high that smartphone OEMs actively decided to cap the amount of DRAM in their flagship phones to protect their profit margins.

The Fallback: During the crypto mining boom, hyperscalers were buying server memory at a frantic pace, terrified of supply shortages. The 2017–2018 crypto bull run created an insatiable demand for high-end graphics cards, sucking up massive amounts of GDDR and standard PC DRAM.

The Crash: By 2019, cloud hyperscalers realized they had massively over-ordered DRAM, and switched to inventory digestion mode. Then came the crypto winter. Bitcoin crashed when people realized it was unprofitable to mine crypto and the demand for graphics cards and GDDR evaporated overnight.

2022-2023: Post-Covid Demand Drop

The Softening: By early 2022, everyone who needed a laptop or phone for remote work already had one. As inflation spiked, consumer spending on electronics completely froze. PC and smartphone OEMs hit the brakes hard, cancelling memory orders to burn through their existing warehouses of chips.

The Fallback: There was none. Cloud hyperscalers were not on a spending spree for memory, and as a result, nothing softened the blow.

The Crash: Because memory makers had aggressively built new fabs during the 2021 boom, the simultaneous collapse of both the consumer main market and the enterprise fallback created the worst inventory glut in the history of the memory industry, forcing memory makers into deep operating losses.

Memory cycles have mostly had an infrastructure buildout softening the blow when consumer demand drops. The question now is: will this happen again with the AI infrastructure buildout?

After the paywall:

Understanding the New AI Realities: The four structural differences—memory density, wafer efficiency, allocation shift, and contract stickiness—that make current AI infrastructure demand fundamentally unique.

Memory as the Bottleneck: Why memory, particularly for long context windows and inference, has replaced compute as the primary bottleneck in AI systems.

Revisiting the Thesis and Risks: Evaluates the AI buildout as a structural floor for demand, and provides perspectives on the 2026 memory pullback and risks.