Tokenmaxxing and the Token Value Chain

Who profits from tokenmaxxing and who gets stuck with the bill.

This piece is different from the usual hardware deep-dives I write, but I wanted to share some thoughts on tokenmaxxing, Silicon Valley’s newest trend. Token usage is fast becoming a proxy for productivity, much like lines of code in the dot-com era.

Meta’s now-defunct “Claudeonomics” leaderboard ranked employees by token usage and celebrated power users with titles like “Token Legend.” In a 30-day window, company-wide consumption topped 60 trillion tokens, with the top contender alone burning 281 billion. CTO Andrew Bosworth bragged publicly that his best engineer was spending the equivalent of his salary in tokens and getting up to 10x more productivity out of it. How that productivity was measured remains unknown. On the All-In podcast at GTC, Nvidia CEO Jensen Huang said that if his $500K engineer was not consuming at least $250K worth of tokens, he would be “deeply alarmed.” Silicon Valley engineers tell me their companies are pushing the same tokenmaxxing message internally. But once the number of tokens used becomes the target, it stops being a useful metric (Goodhart’s law).

Here’s what you have to pay attention to: the tokenmaxxing message only applies to those who can establish at least a linear (preferably exponential) relationship between outcome and token usage. Tokenmaxxing can be rational, but not always.
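To make the "linear relationship" condition concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the token price, the two value curves, and their parameters are hypothetical and not taken from any company mentioned here. It compares a consumer whose outcome grows linearly with tokens against one whose outcome shows diminishing returns.

```python
import math

# Hypothetical price: dollars per million tokens (an assumption, not a quoted rate).
PRICE_PER_M_TOKENS = 10.0


def linear_value(tokens_m: float, value_per_m: float = 25.0) -> float:
    """Outcome value that grows in proportion to tokens used (in millions)."""
    return value_per_m * tokens_m


def diminishing_value(tokens_m: float, scale: float = 500.0) -> float:
    """Outcome value with diminishing returns: each extra token is worth less."""
    return scale * math.log1p(tokens_m)


def profit(value_fn, tokens_m: float) -> float:
    """Value created minus what the tokens cost."""
    return value_fn(tokens_m) - PRICE_PER_M_TOKENS * tokens_m


# With a logarithmic value curve, profit peaks where marginal value equals price:
# scale / (1 + t) = price, so t* = scale / price - 1.
best_tokens_m = 500.0 / PRICE_PER_M_TOKENS - 1

for t in (10, 49, 100, 1000):
    print(f"{t:>5}M tokens  linear: {profit(linear_value, t):>10.0f}"
          f"  diminishing: {profit(diminishing_value, t):>10.0f}")
```

Under the linear curve, profit keeps rising with usage, so burning more tokens is rational as long as value per token exceeds price. Under the diminishing curve, profit peaks and then turns negative, which is the case where tokenmaxxing stops paying.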

Foundation model providers like Anthropic and OpenAI have a clean, understandable revenue metric (pay-per-token). Meta has massive internal workloads like serving ads that require internal token consumption. Garry Tan wants software startups built faster at YC. Nvidia and AMD simply want to sell more hardware.

In this post, we will examine the promise of the Token Value Chain, how reality is more nuanced, who stands to benefit, and who stands to lose. More importantly, we will discuss what a “smart token” strategy should look like for companies looking to go AI-native, and when tokenmaxxing is a viable strategy.


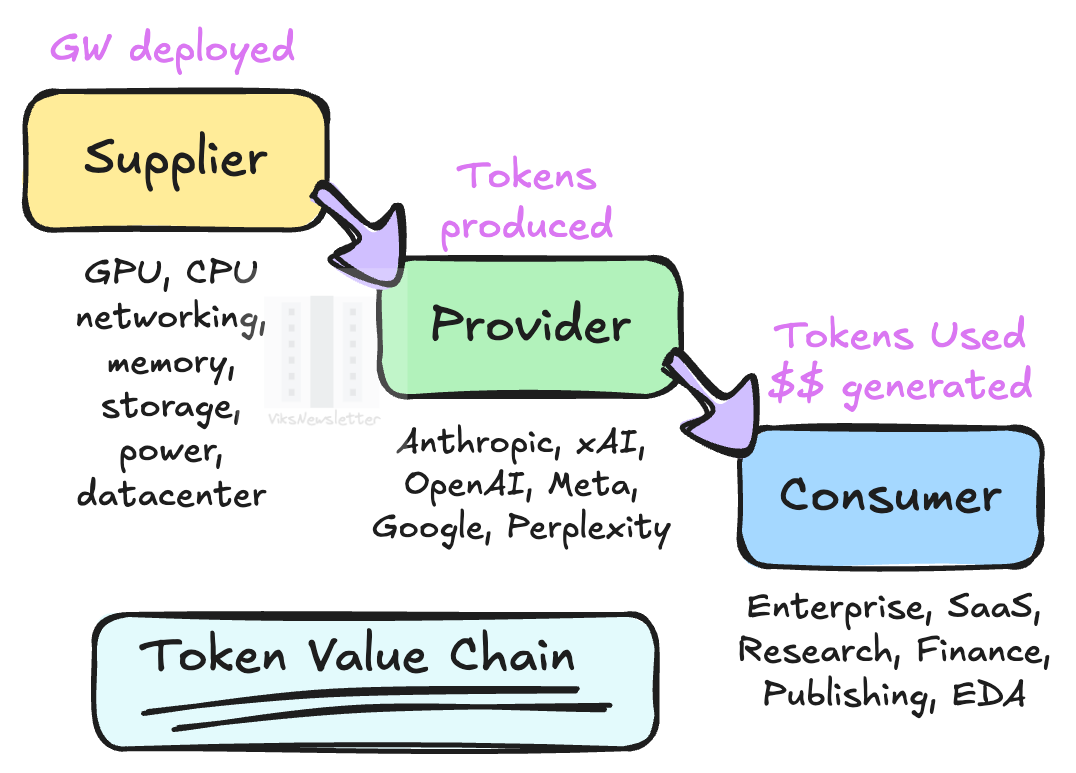

The Token Value Chain

The token value chain is simple: massive amounts of wealth injected into the top of the chip manufacturing supply chain will flow through token providers, and ultimately manifest as profit for enterprises adopting AI through dramatic increases in productivity (products shipped, revenue earned, etc.).

The Token Value Chain has three levels:

Token Supplier: Nvidia and AMD sit at the top of this tier selling GPUs, but the real supplier base is much wider: CPUs, memory/storage, interconnects (copper/optics), PCBs, and power/cooling. Neoclouds like CoreWeave and Nebius belong here too, renting out GPU capacity by the hour. Every single token burned is a pick-and-shovel sale for this tier. Revenue scales with volume, setting aside supply chain and power bottlenecks.

Token Provider: Anthropic and OpenAI do most of the work, with Google, xAI and a handful of others in the mix. These are the companies that take raw compute from the Supplier tier and turn it into tokens sold on a pay-per-use basis. Every token they produce is money, which is why Anthropic’s revenue run rate has gone from $1 billion to $30 billion in about 15 months. Revenue here also scales with volume. The more their customers tokenmaxx, the better the numbers look.

Token Consumer: Enterprises, startups, and increasingly every Fortune 500 company that has been told to go AI-native. This is also the only tier in the value chain whose revenue is not tied to token volume, at least not directly, and not always.
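As a back-of-the-envelope check on the Provider-tier figure quoted above (a run rate going from $1 billion to $30 billion in about 15 months), the sketch below computes the implied compound monthly growth. It assumes smooth month-over-month compounding, which is a simplification and not something the reported figures establish.

```python
import math

start_run_rate = 1e9   # $1 billion, as quoted in the text
end_run_rate = 30e9    # $30 billion, as quoted in the text
months = 15            # "about 15 months", as quoted in the text


def implied_monthly_growth(start: float, end: float, periods: int) -> float:
    """Constant per-period growth rate that takes `start` to `end`."""
    return (end / start) ** (1 / periods) - 1


monthly = implied_monthly_growth(start_run_rate, end_run_rate, months)
# Months needed to double at that constant rate.
doubling_months = math.log(2) / math.log(1 + monthly)

print(f"Implied growth: {monthly:.1%} per month")
print(f"Doubling time:  {doubling_months:.1f} months")
```

Under that assumption the run rate compounds at roughly 25% a month, doubling about every three months.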

The promise of AI is that massive investment in the Supplier tier translates into more tokens generated by Providers, which ultimately leads to massive profitability at the Consumer level, which then feeds back into the massive infrastructure spend at the Supplier tier. Look closer, though: Provider revenue is tied to token volume, which translates into gigawatts deployed at the Supplier tier. But it is the Consumer who ultimately pays the token price.

Gavin Baker put this eloquently in an interview at Nvidia GTC:

“If you are not a token producer, your fate as a business is going to be entirely determined by how efficiently and effectively you consume tokens as an organization.”

In other words, while the cost per token used is predetermined, the revenue generated per token used is anybody’s guess. While both the Supplier and the Provider win on volume, the Consumer has to win on outcome. If you view tokens as the raw material required to make a product, then there are two ways to run the business:

Use as little raw material as possible to produce products, and increase margins. A token-constrained consumer would use an intentional token deployment strategy and ride the efficient frontier of intelligence per token used. A number of “smart token strategies” can be deployed to get the “most bang for token buck.”

Use as much raw material as possible to generate as many products as possible and corner the market. A token-rich consumer would deploy an effectively unlimited number of tokens from a leading model, without regard to token cost, to produce remarkable results, just as infinite monkeys at typewriters can eventually produce Shakespeare. In theory, infinite intelligence (and money) would automatically lock out competitors, leaving token-constrained entities unable to compete. This is tokenmaxxing as a strategy.
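The two approaches above can be sketched as simple unit economics. All names and numbers below are hypothetical, chosen only to show the shape of the trade-off: the token price is treated as known, while revenue per product is the uncertain input.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TokenConsumer:
    """Hypothetical unit economics for a company that buys tokens."""
    name: str
    products_shipped: int
    tokens_per_product_m: float  # millions of tokens burned per product
    price_per_m_tokens: float    # dollars per million tokens (the known cost)
    revenue_per_product: float   # dollars per product (the uncertain part)

    @property
    def token_cost(self) -> float:
        return self.products_shipped * self.tokens_per_product_m * self.price_per_m_tokens

    @property
    def revenue(self) -> float:
        return self.products_shipped * self.revenue_per_product

    @property
    def profit(self) -> float:
        return self.revenue - self.token_cost

    @property
    def margin(self) -> float:
        return self.profit / self.revenue

    @property
    def breakeven_revenue_per_product(self) -> float:
        """Revenue per product needed just to cover the token bill."""
        return self.tokens_per_product_m * self.price_per_m_tokens


# Approach 1: few tokens per product, fewer products, fat margin.
frugal = TokenConsumer("token-constrained", 1_000, 2.0, 10.0, 100.0)
# Approach 2: many tokens per product, many products, thin margin.
maxxer = TokenConsumer("token-rich", 5_000, 8.0, 10.0, 100.0)

for c in (frugal, maxxer):
    print(f"{c.name:>17}: profit ${c.profit:,.0f}, margin {c.margin:.0%}, "
          f"break-even ${c.breakeven_revenue_per_product:.0f}/product")

# Stress test: what if the market only pays $70 per product?
for c in (frugal, maxxer):
    stressed = replace(c, revenue_per_product=70.0)
    print(f"{c.name:>17} at $70/product: profit ${stressed.profit:,.0f}")
```

With these made-up inputs, the token-rich consumer earns more absolute profit at a much thinner margin, and a drop in revenue per product pushes it into a loss while the token-constrained consumer stays profitable. Cost per token is fixed up front, whereas the outcome side is where the risk sits.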

We’ll expand on these two ideas after the paywall.