The AI Datacenter CPU Yellow Pages

Grace, Vera, Venice, Turin, Diamond/Granite Rapids, Clearwater/Sierra Forest, Graviton, Cobalt, Phoenix, AmpereOne.

In last week’s article, we discussed why CPUs are critical in the era of agentic AI. The “operational burden” of orchestrating AI tasks falls on the CPU, which affects GPU utilization rates and, eventually, TCO. Check out the whole post.

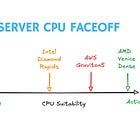

In the paywalled portion of the above post, we compared 16 different server CPUs and scored them across nine different metrics to assess their suitability for reasoning or action-oriented workflows. In this post, we will show the work behind the CPU scores, discuss each rating, and provide a brief use-case recommendation.

In an ideal world, the scoring would be based on actual workloads, something along the lines of InferenceX/ClusterMAX by SemiAnalysis. I have neither the hardware access nor the skills to do this, so we will compare known/reported features instead.

Claude Opus 4.6 was used to help collate and organize the results. A lot of the information is parsed from SemiAnalysis’ extensive reporting on the landscape of datacenter CPUs, with added information to compare CPUs across a wide range of features. AI has been immensely useful in this research and helped speed up analysis that would have taken far too long for one person to do manually. I have done my best to verify the accuracy of metrics and the ratings. Please contact me if you find errors.

Below is a list of CPUs that are covered in this article. You can access each of them out of order using the sidebar on Substack. I might continue to update/add to this server CPU database, which is why this will remain purely on Substack for paid subscribers. No downloadable assets will be available.

NVIDIA (Grace, Vera) — For free subscribers

AMD (Venice Dense, Venice Classic, Turin Dense)

Intel (Diamond Rapids, Granite Rapids, Clearwater Forest, Sierra Forest)

ARM Hyperscaler (AWS Graviton5, Microsoft Cobalt 200)

Other ARM (ARM Phoenix, AmpereOne M)

Excluded: Google Axion (low core counts), Huawei Kunpeng 950 (too little known)

Scoring Framework

Here is a quick recap of the scoring framework. Each CPU is scored on 9 metrics using a 1-5 point scale, where higher is better. The points are later weighted depending on whether the feature is more suitable for a reasoning or an action-oriented workload.

Per-core performance: Higher clock speeds and/or higher IPC are good for both reasoning and action workloads. Fast CPUs finish tasks quickly and watch GPU tokens closely to determine next steps.

Core count: Higher is better for action workloads, not important for reasoning workloads. More cores = more agents. Multi-threading helps a lot.

CPU-xPU interconnect bandwidth: Higher is better for long-context reasoning workloads so that large batches of tokens can be exchanged quickly; not important for action workloads that only use the GPU for short durations.

Memory BW and capacity: More is better for both reasoning and action workloads. Reasoning workloads will evict to lower memory tiers; action workloads need working memory for a large number of agents.

Cache size: More is better for action workloads so that small data does not have to leave the chip. This means lower latency. Not important for reasoning workloads because of large memory traffic that won’t fit in cache anyway.

Performance/watt: More is better for action workloads especially if CPU counts in the datacenter grow; unimportant for reasoning workloads because power is dominated by GPU use.

PCIe speeds: More is better for action workloads because lots of external accesses are involved, unimportant for reasoning workloads where data exchange is mostly between CPU-xPU.

ISA: x86 is better for action workloads only because of the maturity of the software ecosystem; either is okay for reasoning workloads where there is not a wide variety of tools being called. x86 = 5 points, Arm = 3 points, in the scoring system.

NUMA latency: Lower is better for action workloads because all agents will have similar memory access latency; unimportant for reasoning workloads that only use a few cores. Fewer chiplets in CPU design usually means lower NUMA latency.

Composite Scores:

Reasoning composite is weighted on: per-core performance, xPU interconnect bandwidth, and memory BW/capacity

Action composite is weighted on: core/thread count, cache, perf/watt, PCIe, ISA (x86 advantage), and NUMA

The composite scores are calculated based on the average scores of the metrics that matter to either workload. The score is then adjusted up or down based on market dynamics, historical trends and company specifics. This is admittedly not very scientific, but then neither is the real world when it comes to choosing a CPU.
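As a rough illustration of the averaging step, here is a minimal Python sketch that computes the unadjusted composites from the per-metric ratings listed in the Grace and Vera entries of this article. The metric key names and the `raw_composites` helper are hypothetical labels chosen for this example, and an equal-weight mean is assumed, since the text describes the composite as an average of the relevant metrics.

```python
from statistics import mean

# Metric groups as described in the Composite Scores section.
# The key names are illustrative labels, not an official schema.
REASONING_METRICS = ("per_core_perf", "xpu_interconnect", "memory")
ACTION_METRICS = ("core_count", "cache", "perf_per_watt", "pcie", "isa", "numa")

# Per-metric ratings (1-5) copied from the Grace and Vera entries in this article.
RATINGS = {
    "Grace": {
        "per_core_perf": 3, "core_count": 1, "xpu_interconnect": 4,
        "memory": 2, "cache": 2, "perf_per_watt": 3,
        "pcie": 2, "isa": 3, "numa": 5,
    },
    "Vera": {
        "per_core_perf": 5, "core_count": 2, "xpu_interconnect": 5,
        "memory": 5, "cache": 3, "perf_per_watt": 3,
        "pcie": 4, "isa": 3, "numa": 5,
    },
}


def raw_composites(ratings):
    """Return (reasoning, action) equal-weight means, before any manual adjustment."""
    reasoning = mean(ratings[m] for m in REASONING_METRICS)
    action = mean(ratings[m] for m in ACTION_METRICS)
    return reasoning, action


for cpu, ratings in RATINGS.items():
    reasoning, action = raw_composites(ratings)
    print(f"{cpu}: raw reasoning {reasoning:.2f}, raw action {action:.2f}")
```

The raw reasoning means match the published reasoning scores for both CPUs (3 and 5). The raw action means (about 2.67 and 3.33) come out higher than the published action scores of 1 and 2, which is presumably where the manual adjustment described above comes in.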

NVIDIA

Grace

Reasoning: 3 | Action: 1 | Best Fit: Reasoning

Cores/Threads: 72 cores / 72 threads

Process: TSMC 4N | TDP: 200-250W (single die)

Socket: BGA (platform-locked)

Memory: LPDDR5X, 480 GB capacity, 512 GB/s bandwidth

I/O: PCIe5 | NVLink-C2C 900 GB/s bidirectional to attached GPUs

Status: Available since mid-2023 | Shipping in production systems

Access: Platform-locked — only available in NVIDIA superchip configurations

Roadmap: Grace → Vera (H2 2026)

Per-core perf (3/5): Neoverse V2 cores with 1MB L2. According to SemiAnalysis, Grace has a significant branch prediction bottleneck, and as a result the Grace CPUs in GB200 and GB300 are currently slowing down AI workloads. Hopper used x86 CPUs, and Vera uses custom Arm cores, which suggests that the “off-the-shelf” Neoverse cores used in Grace just didn’t work out.

Core count (1/5): 72 cores / 72 threads. No SMT. Lowest core count among the non-specialty CPUs. Insufficient for action workloads. A blog post by the NVIDIA and vLLM team suggests that the CPUs could not keep up with GPUs, and needed special optimizations to keep GPU utilization high.

xPU interconnect (4/5): NVLink-C2C at 900 GB/s bidirectional. Second-best GPU interconnect, behind Vera’s 1.8 TB/s. Coherent memory access allows GPU to access CPU memory at full bandwidth.

Mem BW & capacity (2/5): 480GB LPDDR5X at 512 GB/s. Decent bandwidth but limited capacity — approximately one-third of Vera’s 1.5 TB. LPDDR5X keeps non-GPU power down.

Cache size (2/5): 114MB L3 + 72MB L2 (1MB per core). Modest total of ~186MB.

Perf/watt (3/5): LPDDR5X helps with power efficiency, and the simpler Arm ISA leads to better efficiency too, but the performance itself is not top of the line either.

PCIe (2/5): PCIe5 with limited lane count — NVIDIA focused the IO budget on NVLink-C2C, not PCIe connectivity.

ISA (3/5): ARM. Same ecosystem lock-in as Vera — typically only available as part of NVIDIA’s superchip platforms (Grace Hopper, Grace Blackwell). Reports state that NVIDIA is deploying both Grace and Vera CPUs in standalone formats as part of their recent partnership with Meta.

NUMA (5/5): Single die with 6x7 mesh, 72 cores. Very clean, uniform memory access.

Recommendation: Grace is a reasoning CPU that’s being superseded by Vera. Its NVLink-C2C gives it a clear reasoning advantage over non-NVIDIA CPUs, but the reported branch prediction bottleneck, if accurate, actively slows AI workloads. It is unsuitable for action workloads with only 72 threads and no SMT. Vera is a strict upgrade in every dimension.
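The arithmetic behind a few of the Grace figures above can be checked with a short Python sketch. It uses only numbers quoted in this article; the dictionary layout and variable names are illustrative, and 1.5 TB is assumed to mean 1500 GB (decimal units).

```python
# Figures quoted in this article for Grace.
GRACE = {
    "cores": 72,
    "l3_mb": 114,
    "l2_mb_per_core": 1,
    "mem_gb": 480,
    "mem_bw_gbs": 512,
}
VERA_MEM_GB = 1500  # 1.5 TB, assuming 1 TB = 1000 GB


def total_cache_mb(cpu):
    """L3 plus the sum of all per-core L2 slices, in MB."""
    return cpu["l3_mb"] + cpu["l2_mb_per_core"] * cpu["cores"]


cache_mb = total_cache_mb(GRACE)                      # L3 + L2 total
cache_per_core = cache_mb / GRACE["cores"]            # MB of cache per core
bw_per_core = GRACE["mem_bw_gbs"] / GRACE["cores"]    # GB/s of memory bandwidth per core
capacity_vs_vera = GRACE["mem_gb"] / VERA_MEM_GB      # fraction of Vera's capacity

print(f"Total cache: {cache_mb} MB ({cache_per_core:.2f} MB per core)")
print(f"Memory bandwidth per core: {bw_per_core:.2f} GB/s")
print(f"Memory capacity vs Vera: {capacity_vs_vera:.0%}")
```

This reproduces the ~186MB cache total and the "approximately one-third of Vera" capacity comparison. The per-core figures are derived values for comparison only, not vendor specifications.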

Vera

Reasoning: 5 | Action: 2 | Best Fit: Reasoning

Cores/Threads: 88 cores / 176 threads (Spatial Multithreading)

Process: TSMC N3 | TDP: Not disclosed

Socket: CoWoS-R package (BGA, platform-locked)

Memory: 8x SOCAMM LPDDR5X, 1.5 TB capacity, 1.2 TB/s bandwidth

I/O: PCIe6 + CXL3 on separate IO chiplet | NVLink-C2C 1.8 TB/s bidirectional to Rubin GPUs

Status: H2 2026 (NVL72 platform)

Access: Platform-locked to Rubin NVL72 (72 GPUs + 36 Vera CPUs + 18 compute blades per rack) | Standalone Vera available through select partners

Roadmap: Grace → Vera → TBD

Per-core perf (5/5): Custom Olympus ARM core: NVIDIA’s own design, not the off-the-shelf Neoverse V2 used in Grace. Supports SMT for 176 threads. 2MB L2 per core. NVIDIA claims a 2x performance improvement over Grace. ARMv9.2 with SVE2 FP8 operations.

Core count (2/5): 88 cores / 176 threads. Deliberately modest: this CPU is designed to run a small number of tasks extremely fast, not hundreds of agents. 176 threads is too few for large-scale agent orchestration.

xPU interconnect (5/5): NVLink-C2C at 1.8 TB/s bidirectional — the highest CPU-to-GPU bandwidth of any CPU here. Coherent memory sharing lets Rubin GPUs read directly from CPU memory. This is the defining feature that makes Vera a reasoning CPU.

Mem BW & capacity (5/5): 1.5 TB of LPDDR5X across 8 SOCAMM modules at 1.2 TB/s bandwidth. Massive capacity for KV-cache expansion. Using LPDDR5X instead of DDR5 DIMMs keeps power low while maintaining bandwidth.

Cache size (3/5): 162MB L3 spread across 7x13 mesh, plus 2MB L2 per core (176MB total L2). Adequate for managing inference state and metadata but not exceptional for the core count.

Perf/watt (3/5): LPDDR5X helps with power but this isn’t an efficiency-first design. The GPU dominates the power budget anyway.

PCIe (4/5): PCIe6 with CXL3 on a separate IO chiplet. Available for networking and storage, but the primary GPU data path is NVLink-C2C, not PCIe. PCIe becomes the path to NVMe for KV-cache tier-3 offload.

ISA (3/5): ARM. Locks you into the NVIDIA ecosystem — Vera only ships as part of the Rubin platform (1 Vera CPU to 2 Rubin GPUs per superchip), although you apparently can buy Vera standalone. ARM ecosystem is adequate for reasoning workloads but less versatile for diverse tool execution.

NUMA (5/5): All 88 cores on a single reticle-sized compute die (3nm) with the full 7x13 mesh. Memory and IO are on separate chiplets (6 dies total on CoWoS-R), but all cores share one die. Single, clean NUMA domain for compute — no cross-die core-to-core penalties. Cleanest NUMA topology at this core count.

Recommendation: Vera is the gold standard for reasoning workloads. It scores 5 on the three metrics that matter most for reasoning (per-core perf, xPU interconnect, memory BW/capacity). Its entire design philosophy is: massive pipe to the GPU, fast cores, lots of memory, nothing else gets in the way. It scores poorly on action metrics because the core count is too low.
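To put the low core count in rack-level terms, the following Python sketch totals the CPU resources in one Rubin NVL72 rack, using only the per-CPU and per-rack figures quoted above. The variable names are illustrative, and the per-GPU shares assume resources are divided evenly, which is a simplification.

```python
# Per-CPU figures quoted in this article for Vera.
VERA = {"cores": 88, "threads": 176, "mem_tb": 1.5, "l2_mb_per_core": 2}

# Rack composition quoted in this article for the Rubin NVL72 platform.
RACK = {"gpus": 72, "cpus": 36}

gpus_per_cpu = RACK["gpus"] // RACK["cpus"]          # GPUs attached to each Vera
rack_threads = RACK["cpus"] * VERA["threads"]        # CPU threads in the whole rack
rack_cpu_mem_tb = RACK["cpus"] * VERA["mem_tb"]      # CPU-attached memory in the rack
threads_per_gpu = rack_threads / RACK["gpus"]        # even split, an assumption
cpu_mem_per_gpu_tb = rack_cpu_mem_tb / RACK["gpus"]  # even split, an assumption
total_l2_mb = VERA["cores"] * VERA["l2_mb_per_core"] # per-CPU L2 total

print(f"GPUs per Vera CPU: {gpus_per_cpu}")
print(f"CPU threads per rack: {rack_threads} ({threads_per_gpu:.0f} per GPU)")
print(f"CPU memory per rack: {rack_cpu_mem_tb} TB ({cpu_mem_per_gpu_tb} TB per GPU)")
print(f"L2 per CPU: {total_l2_mb} MB")
```

Under these assumptions each GPU is backed by 88 CPU threads and 0.75 TB of CPU memory, which fits the picture of a CPU built to feed a GPU rather than to host hundreds of independent agents.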