🍪 TWiC: MU HBM4, OCI MSA, Credo Optics, VCSEL Scale-Up, DGX GB300, NBIS $27B

Hot takes from the AI oven.

Way too much news this week due to simultaneous GTC and OFC events. We’ll only hit a few topics even though there is a lot more out there. We covered GTC already with both a deep dive and a podcast, so we won’t dwell on it today.

Deep dive: Beyond GTC: A Deep Dive into Compute, LPX, and the Untold Story of SpecDec

Semi Doped Podcast: Quick Takes: Nvidia GTC keynote

We genuinely feel this was one of our spontaneous and fun episodes on Semi Doped. Highly recommend you check it out. We have crossed 1,100 subs on YouTube and 7,500 podcast downloads already!

Now on to the news.

Micron supplying “fastest” HBM4 to Nvidia Vera Rubin

A SemiAnalysis report published earlier this year declared that Micron will be supplying zero HBM4 to Nvidia in the early Rubin ramp last month. But those have largely been quelled now with Jensen publicly touting their “fastest HBM4” delivered. Several folks pushed back on this at the time, and reiterated that selling MU 0.00%↑ on this rumor was a bad idea. They argued that even if Micron did not sell HBM to Nvidia, they are better off selling much wanted DRAM instead at elevated prices with DRAM being significantly easier to manufacture. There were also rumors earlier that the HBM4 base die being designed on a memory node (1-beta) somehow slowed down lane speeds compared to competition who used logic nodes in the base die. This was later confounded by rumors that Micron somehow had trouble measuring HBM performance.

Well, all that guessing seems questionable now. Micron posted great numbers last quarter, stock is up 62% YTD, and the line still goes out the door for memory while they supply HBM4 to Vera Rubin. I am still skeptical that Micron’s HBM is actually the “fastest,” but it seems fast enough that Jensen is willing to take a picture with Sanjoy Mehrotra, CEO of Micron. Also, Jensen, some people would have wanted that memory before you signed on the wafer. Think about where you go about signing stuff; just sayin’.

(via Micron)

Optical scale-up goes official with OCI MSA

A bunch of important companies got together and decided to put some method to the madness in optical networking. The Optical Compute Interconnect Multi-Source Agreement (OCI MSA) is a way to make sure everyone is talking the same language when we go to optical scale up. This allows infrastructure buildouts to use components from different vendors who all comply to the open standard, thus fostering a robust ecosystem of optical networking and avoiding getting everyone locked in to how any one company does things. This is an important step forward, because it makes optical scale-up official, and now everyone is building towards it. In a similar vein, Lightmatter announced a new collaborative initiative within the Open Compute Project (OCP) to create open specifications for interoperable CPO.

(via oci-msa.org)

Credo: 400G/800G/1.6T Optical DSPs, ZeroFlap 800G transceivers

Credo announced their Cardinal 1.6T optical DSP, and Robin DSPs for 400G and 800G, in addition to zero-flap 800G transceivers, showing that they do recognize that optics is going to be around. But so is copper. This puts Credo in a nice position to supply to both markets should CPO adoption get pushed out. Also, microLED is not viewed as useful by the industry, but regardless of however that turns out, it’s interesting that Credo has a “better than copper, not as good as optics” technology option that might actually prove useful. Or not. Time will tell.

I am neither a copper or optics bull (I’m both in the short to medium term), and investors need to think for themselves and stop shorting CRDO 0.00%↑ every time there is some uptick in optics news. Jensen was clear at GTC that we are going to use both copper and optics in the near term. Vera-Rubin NVL576 will use NVL72 or NVL144 racks hooked up to each other via optics, but each rack will still use copper. Also, recognize those infamous purple cables in Meta’s MTIA 400?

(via businesswire: Cardinal 1.6T DSP, Robin 400/800G DSP, 800G-ZF)

Lumentum: 1060 nm VCSEL for slow-but-wide optical scale-up

Vertical Cavity Surface Emitting Lasers (VCSELs) are mature technology, but are not great for ultra high speed optical communication links because of their nonlinear behavior, wide spectral line width, dispersion into multiple modes, and limitations on modulation bandwidth. But you can run them at slower speeds, and then use a VCSEL array to run multiple lanes in parallel — like the HBM of optics, so to speak.

VCSELs have another major advantage in today’s day and age. From Lumentum:

As a VCSEL-based scale-up solution, the platform also provides an independent supply chain alternative to silicon photonics and InP laser-based architectures.

InP alternative you say? 👀 VCSELs typically use Gallium Arsenide (GaAs) as the III-V material for laser light generation, and not InP which is in extremely short supply. Just imagine a scenario where we could do optical scale-up with GaAs VCSELs; where does that put the highly sought after InP supply chain?

Luckily for LITE 0.00%↑ bulls , Lumentum is also the leader in InP EML lasers which is currently in high demand, and their CW lasers for CPO has some competition from Coherent, Applied Optoelectronics and others. If they corner the market with 1060 nm VCSELs for optical scale-up, now wouldn’t that be a Lumentum bull case? Oh, and if you’re wondering about the strange choice of 1060 nm wavelength, here is what Lumentum has to say:

Compared to conventional 850nm datacom VCSELs, 1060nm devices deliver improved speed capability, superior high-temperature performance, and exceptional long-term reliability.

We’re still looking at demo hardware from OFC 2026, but this is a tech to monitor closely. If you want to learn more about the “slow-but-wide” approach to optics, check out Lightmatter’s blog post.

(via Lumentum)

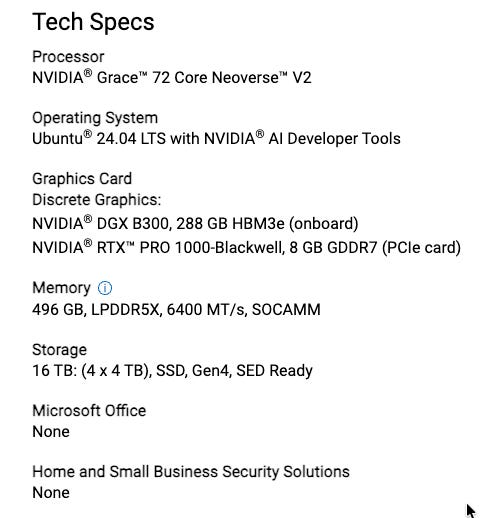

NVIDIA DGX Station GB300

At GTC, Jensen mentioned how each company should have their agentic AI strategy, or risk falling behind. One concern for many sensitive end markets is the company information staying on premises — think healthcare, insurance companies, law firms, and even engineering firms.

The DGX GB300 is the enterprise solution that you need, because it is explicitly designed for the full develop-to-deploy pipeline, not just R&D. Enterprises deploy it on-premises in their own data centers or colocation facilities so they can run private, secure, high-performance inference without sending sensitive data to public clouds. That is “local inferencing” in the enterprise sense — your data and models stay inside your infrastructure, with full control and predictable cost/latency.

This machine is a beast; just look at the specs below. My guess is the cost is anywhere between $30,000-$50,000, because the GB10 version is ~$5,000. Even if my estimates are off by 2x, this machine is a no brainer to deploy within a company so that engineers can use all the tokens that their on-prem hardware supports. This kind of hardware makes it easy to treat token costs as depreciating CapEx, rather than buying tokens from cloud companies and treating it as OpEx.

Aside: if you’re a $500K engineer reading this, make sure you cost your company half your salary in AI tokens. Tell them Jensen said so in your annual review, and explain to your manager how AI actually makes things cheaper. Post back in the comments with your annual raise.

Aside’s aside: Just ask the manager to buy you a DGX Station GB300.

(via Nvidia)

Nebius: $27B AI Infrastructure Agreement with Meta

Massive five-year deal, and it helps to understand how it works.

$12 billion committed/dedicated capacity: Nebius will build and reserve AI compute clusters specifically for Meta across multiple locations → first large-scale deployments of NVIDIA’s Vera Rubin platform. Deliveries start early 2027.

Up to $15 billion additional: Meta has committed to buy any remaining available capacity in certain upcoming Nebius clusters (after Nebius sells to third-party customers first).

For Meta, this means that they have secured compute capacity five years in advance. This also shows that AI infrastructure buildouts will be a hybrid of self-building and outsourcing. Also, the infrastructure spending is showing no signs of slowing down.

For Nebius, this deal is in the order of their market cap of ~$30B. The locked in utilization rate from a customer the size of Meta means that their buildout will be easier to finance. But the risks here lie in the execution timelines for datacenters and availability of power to keep them running.

(via Nebius)

Have a great weekend!

I’m curious why this is: “Also, microLED is not viewed as useful by the industry”

I thought part of the reason CRDO had such a tough week was that MSFT and Mediatek are doing a separate microLED product. That made me think people do see a use for it, but it’s going to be a multi-player market