🍪 TWiC: Samsung SiPho, Mobile Demand, Nvidia+Marvell, Intel Fab, Claude Leak, ++

This Week in Chips: Freshy chip news from the semi galaxy, and neighboring dwarf stars

The semi world is still recovering from the “TurboQuant setback” even as memory demand looks stronger than ever. This week’s deep dive covers exactly why “this time it’s different.” Several analysts, including FundaAI, released reports this week with similar conclusions: the 2026 memory pullback is more of a buying opportunity, and memory fundamentals look strong.

Here’s the post if you haven’t gotten to it yet.

In last week’s Semi Doped podcast, we covered TurboQuant in some detail and debated whether Arm’s AGI CPU will actually displace x86 in the long run.

Now, on to the news.

Samsung Enters SiPho, Wants to Connect HBM with Light

Samsung unveiled its production-ready SiPho Foundry platform at OFC 2026, complete with a PDK and a 300 mm wafer manufacturing process. The main pitch is vertical integration: HBM and silicon photonics under one roof, a turnkey bundle that TSMC cannot match. Early results are expected in the 2028–29 time frame, and the industry places Samsung roughly three years behind TSMC, mainly on ecosystem, design enablement, and customer trust. Samsung’s Semiconductor division has already branded its silicon photonics processes “I-CubeSo” and “I-CubeEo” (try saying that 3 times in a row) and is reportedly working closely with Broadcom and Marvell.

Low/mid-tier mobile is driving demand destruction; PC demand down

MediaTek and Qualcomm are cutting 4nm chip shipments by a combined 15–20 million units because memory has gotten so expensive that the BOM for low- to mid-tier smartphones no longer makes sense. Whether premium-tier phones are insulated is questionable, especially if Apple is, as rumored, buying up all available DRAM at high prices to starve out Android competitors like Samsung (ironic, since Samsung is also Apple's largest DRAM supplier).

Last year, more than 360 million smartphones shipped below $150, a substantial share of global volumes, and that proportion is rising in key emerging markets like Africa and India. The push for greater economies of scale will drive mergers among smaller players: Omdia cites Realme reintegrating under OPPO's umbrella as an early sign of consolidation as vendors seek scale to manage rising costs. The consensus is that this is a structural reallocation of memory capacity toward AI/HBM at the expense of consumer TAM.

Nvidia joins forces with Marvell via NVLink Fusion

Nvidia and Marvell announced a partnership that lets Marvell’s custom ASIC silicon run on Nvidia infrastructure via NVLink Fusion. Market sentiment is overwhelmingly positive, and Marvell shares surged 13%. The deal is viewed as a defensive masterstroke by Jensen: if Nvidia can’t beat the custom-chip movement, it can at least own the fabric those ASICs run on. The clear winner here is Marvell, which gets the $2B investment cookie Nvidia has been handing out to everybody (LITE, COHR, NBIS…) plus “preferred partner” status with Nvidia. This puts Broadcom, the primary backer of the UALink standard as an alternative to NVLink Fusion, in a tougher position.

(via Nvidia News)

Intel buys back 49% stake in its Fab 34 in Ireland

Intel said it would spend $14.2 billion (cash plus debt) to buy back the 49% stake in its Ireland manufacturing facility that it sold to Apollo Global. The fab runs leading-edge nodes such as Intel 4 and Intel 3, producing chips including its Core Ultra and Xeon 6 processors. The price is $3B more than Intel sold the stake for two years ago, and the market reads this as conviction in its foundry strategy: Intel is putting real money behind chip manufacturing. Sentiment is strongly positive, with the move seen as a sign that Intel’s foundry turnaround is actually happening. Still, investors will closely scrutinize whether the strategic benefits justify the added cost and financing burden.

(via Intel)

OpenAI closes record-breaking $122B funding round

OpenAI is now valued at $852B. Seriously, that is a lot of money. OpenAI is pulling in $2B/mo in revenue, is still losing money, and isn’t expected to turn a profit until 2030. 👀

(via The Guardian)

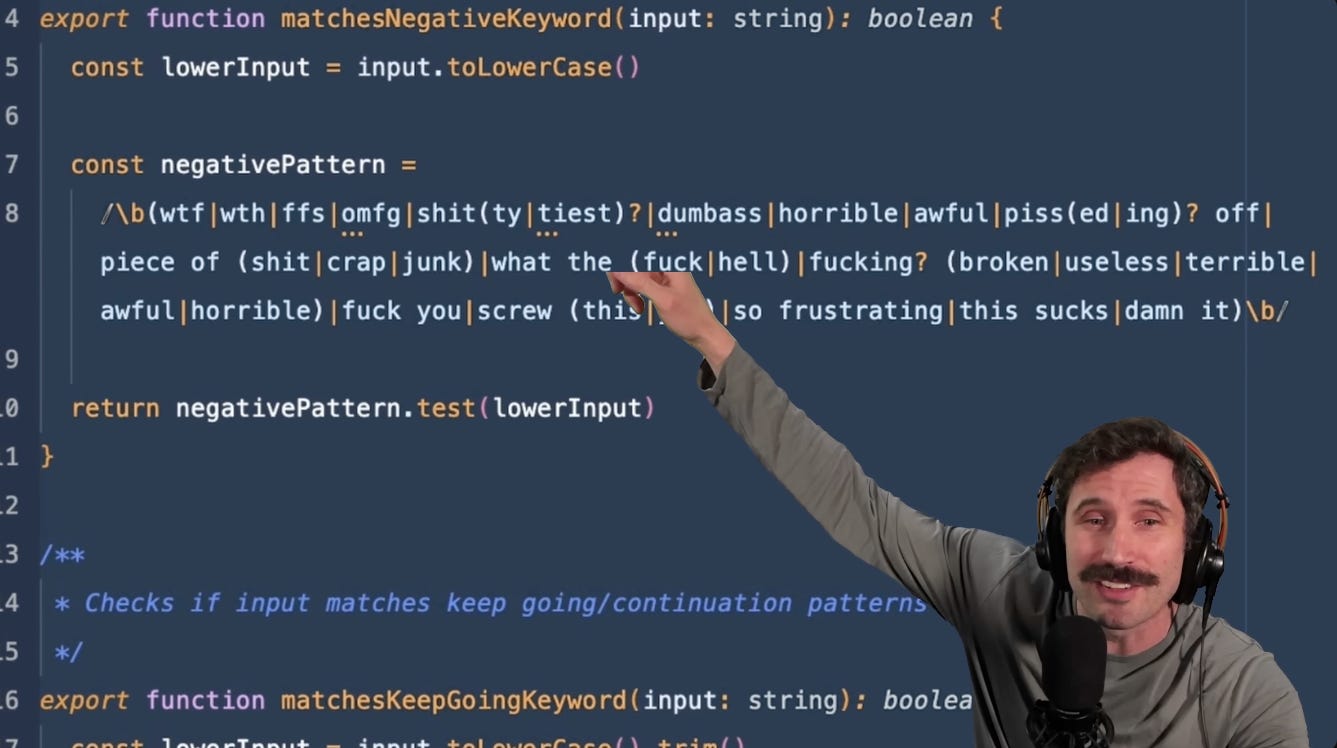

Anthropic’s Claude has a wardrobe malfunction

Claude Code’s source code leaked via its npm package, and yeah, we can see everything underneath 🙈, including a digital pet and an always-on agent. The funniest coverage of this is from ThePrimeagen on YouTube. Seriously, watch it lol.

(via literally everywhere)

Your latest co-worker is now an AI employee

Kuse AI is offering a $2,000/mo virtual AI employee that never sleeps, eats, or goes to happy hour, and more than 2,000 companies have signed up for the waitlist. If a human who demands work-life balance costs 3–5x more, an OpenClaw agent that the company calls “Junior” seems like the better bet. All well and good, but there’s no AI neck to choke when things go wrong.

(via Bloomberg)

Atlas Studio: A Foundation Model for Electromagnetics

This is a physics foundation model useful for a lot of RF design work that would take too long to run through a simulator. Not Boring has a long piece on it. Longtime readers may remember a piece I wrote last year about crazy RF designs from Prof. Sengupta’s group at Princeton. Well, Prof. Sengupta is now pushing back on how radical the EM foundation model really is, comparing the claim to someone saying they invented the transistor.

(h/t @SinghJyotirmai, via Arena Physica)

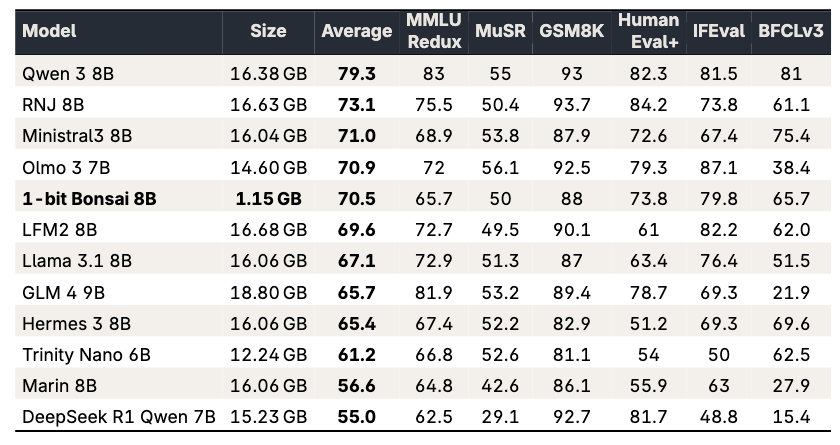

PrismML: 1-bit Bonsai 8B model fits into 1.15GB

Coming off the TurboQuant meltdown, I wonder whether dramatically compressed models like PrismML’s are the next thing memory demand should worry about. If this delivers any level of useful intelligence, it could unlock a whole new world of edge inference. It’s open source and extremely lightweight for an 8B-parameter model. I think I’ll run it on my M3 Pro Mac this weekend and see how I like it. I already tried it on my iPhone. It could also serve as a local fallback model for OpenClaw.

(via PrismML)
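Here’s some quick napkin math on why 1-bit weights matter: weight storage scales linearly with bits per weight. This is a minimal sketch under my own assumptions, not PrismML’s numbers. It assumes a round 8 billion weights and decimal gigabytes (1 GB = 10^9 bytes), and it ignores KV cache and runtime memory. PrismML’s actual parameter count and file layout may differ. Under those assumptions, a pure 1-bit model would be 1.00 GB, so the quoted 1.15 GB works out to about 1.15 bits per weight on average. The extra ~0.15 bits could plausibly go to things like scale factors or a few higher-precision layers, but that breakdown is a guess.

```python
# Napkin math: weight storage for an 8B-parameter model at various precisions.
# Assumptions (not from PrismML): exactly 8e9 weights, decimal GB (1e9 bytes),
# weights only (no KV cache, activations, or runtime overhead).

PARAMS = 8e9  # assumed round parameter count
GB = 1e9      # decimal gigabyte, in bytes


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Storage needed for the weights alone, in decimal GB."""
    return params * bits_per_weight / 8 / GB


def effective_bits(file_gb: float, params: float) -> float:
    """Average bits per weight implied by a given file size in decimal GB."""
    return file_gb * GB * 8 / params


if __name__ == "__main__":
    for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4), ("1-bit", 1)]:
        print(f"{label:>5}: {weight_gb(PARAMS, bits):5.2f} GB")
    print(f"1.15 GB implies ~{effective_bits(1.15, PARAMS):.2f} bits/weight")
```

Same model, 16 GB at FP16 versus roughly 1 GB at 1 bit. That 16x gap is the difference between needing a big GPU and fitting on a phone.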

Have a great weekend!