XPO vs CPO: The Trade-offs Between Speed, Power, and Modularity in Next-Gen AI Networking

A technical walkthrough of the new XPO form factor, and the scale-out networking economics that shape the CPO vs XPO decision

As GPU counts in AI clusters grow, scale-out networking choices are becoming as critical as the compute itself. Switches like Broadcom’s Tomahawk 6 (TH6) carry 102.4T of aggregate bandwidth, while TH7 is expected to deliver 204.8T in 2027. This rapid scaling raises the central question for the next generation of data centers: which networking technologies can handle this bandwidth without breaking the power budget?

The networking industry has been making rapid strides towards Co-Packaged Optics (CPO) for scale-out networking with external lasers, replaceable 3D optical engines, and per-lane data rates of 200G or more. Traditional arguments against CPO such as laser failures and serviceability are no longer show-stoppers, as explained later in this post. Still, CPO is an architectural reset that upends the traditional pluggable optics market and consolidates various networking components into the hands of a few suppliers like Nvidia, Broadcom, and Marvell.

This paradigm shift puts immense pressure on pluggable transceiver manufacturers like Eoptolink, and system integrators like Arista Networks. As optical functions shift to advanced silicon packaging using TSMC’s CoWoS and COUPE, the value chain moves away from traditional PCB assembly and rack integration. In a defensive play to protect their margins and market share, these players are responding with a new format of their own.

At OFC 2026, Arista Networks introduced eXtra-dense Pluggable Optics (XPO) for AI data centers. XPO keeps the existing pluggable approach, but scales it for higher bandwidth and better thermal management. The XPO multi-source agreement (MSA) now has more than 100 participating companies, including Marvell (which plays both the CPO and pluggable sides), Lumentum, Coherent, and Eoptolink, a sign that the industry is taking the format seriously.

In this article, we’ll cover three things:

A primer on the XPO format and how it solves the faceplate bottleneck

The three-way trade-off between speed, power, and modularity that decides CPO versus XPO at each generation

Why CPO and XPO formats will coexist in scale-out at 1.6T but diverge as lane speeds climb to 3.2T and beyond

We’ll conclude with a view on where each format lands across scale-up, scale-out, and scale-across, and why multiple strategies will likely coexist for years.

This post will have a lot of optical terminology. If you are unfamiliar with it, please see an earlier post below that explains most of what you need to know.


How XPO Solves the Faceplate Bottleneck

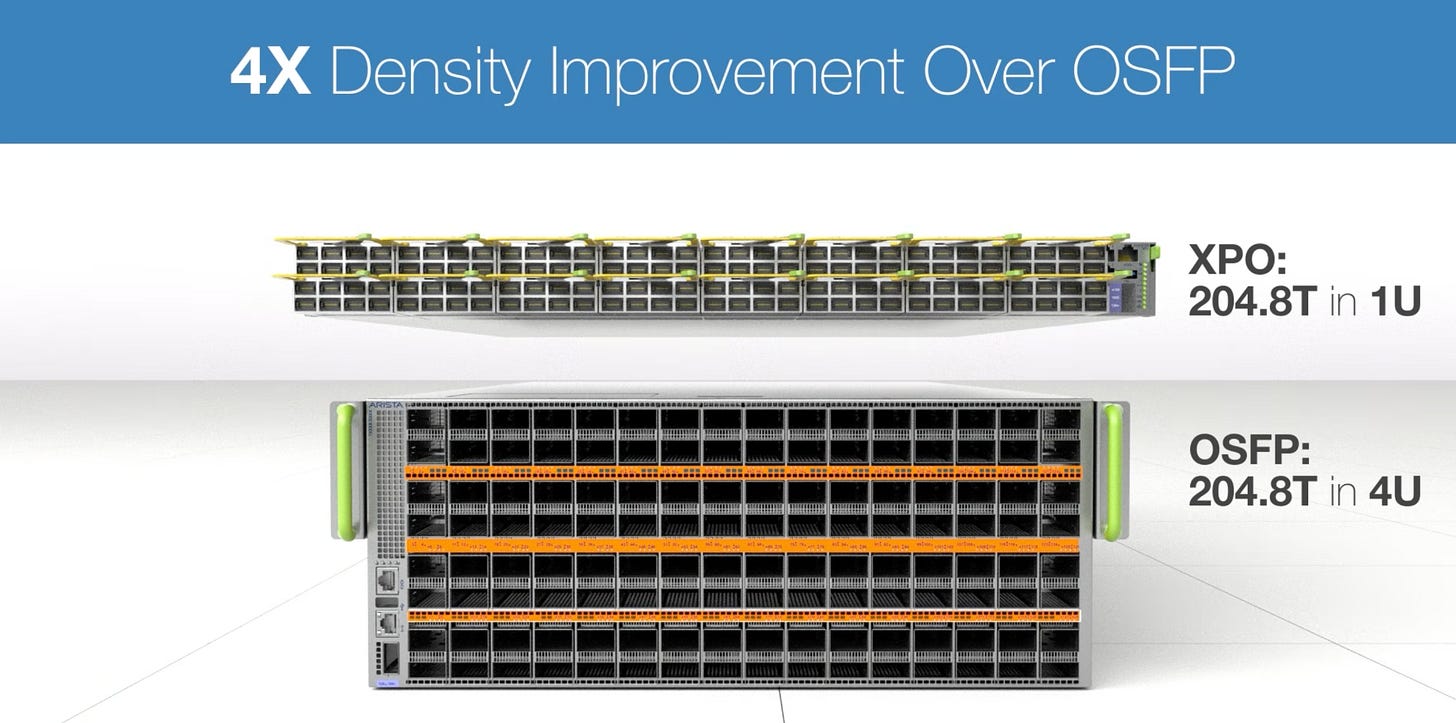

As switch ASICs scale toward 204.8T, the physical real estate of the switch faceplate becomes a severe bottleneck. The traditional pluggable model simply runs out of physical space. Consider the math for outfitting a 204.8T switch with standard 1.6T OSFP modules: a standard 1OU (Open Rack Unit) slot accommodates about 32 OSFP plugs, delivering 51.2T of bandwidth. Fully outfitting a 204.8T switch therefore requires 4OU of rack space purely for front-panel connections.

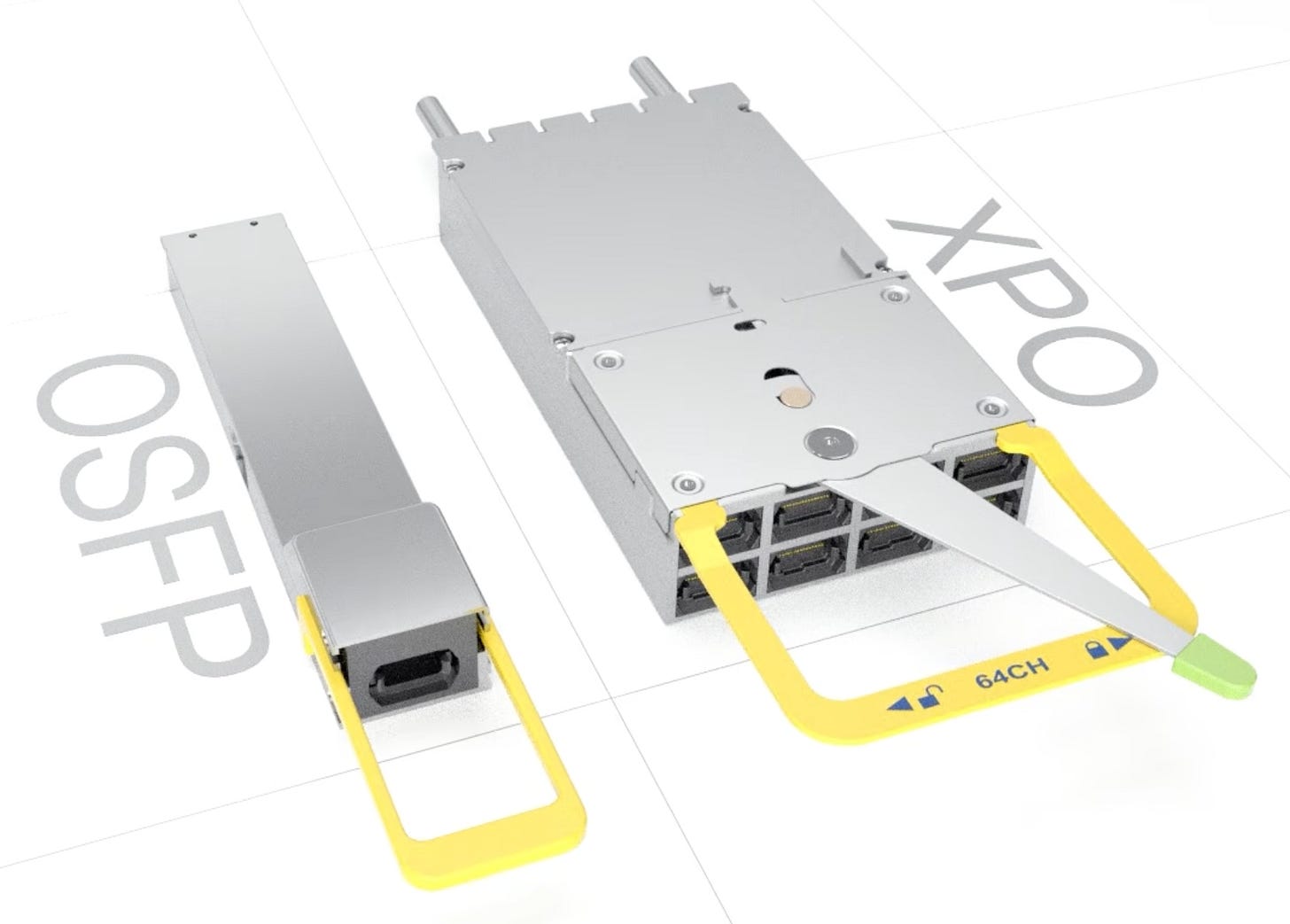

XPO alters this physical footprint entirely. An XPO module delivers 12.8T of bandwidth, or eight times the capacity of a 1.6T OSFP. While the XPO form factor is roughly 2.7x wider (allowing only 16 plugs per 1OU), those 16 plugs can deliver the full 204.8T in that single rack unit.
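The faceplate arithmetic above can be reproduced in a few lines. This is a minimal sketch using only the figures quoted in this section (32 OSFP or 16 XPO plugs per 1OU, 1.6T and 12.8T per module); the function name `faceplate_budget` is illustrative, and bandwidth is expressed in Gbps so the divisions stay in integers.

```python
def faceplate_budget(switch_gbps: int, module_gbps: int, plugs_per_ou: int):
    """Return (plugs needed, rack units of faceplate) for a given switch."""
    # Ceiling division: a partially used plug or rack unit still counts as one.
    plugs = -(-switch_gbps // module_gbps)
    rack_units = -(-plugs // plugs_per_ou)
    return plugs, rack_units


SWITCH_GBPS = 204_800  # 204.8T switch ASIC

# Figures taken from the text: 1.6T OSFP at 32 per OU, 12.8T XPO at 16 per OU.
osfp_plugs, osfp_ou = faceplate_budget(SWITCH_GBPS, 1_600, 32)
xpo_plugs, xpo_ou = faceplate_budget(SWITCH_GBPS, 12_800, 16)

print(f"OSFP: {osfp_plugs} plugs across {osfp_ou} OU")
print(f"XPO:  {xpo_plugs} plugs across {xpo_ou} OU")
print(f"Rack units reclaimed per switch: {osfp_ou - xpo_ou}")
```

Changing the per-OU plug counts or module speeds shows how sensitive the rack-space result is to those inputs.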

The core advantages of XPO:

4x Density Gain: It reclaims 3OU of highly valuable rack space per switch, reallocating it back to CPUs and GPUs at the datacenter level.

Reduced Component Failure Domain: Just 16 XPO plugs replace the DSP, transimpedance amplifier (TIA, which amplifies the detected signal), driver, and photodetector components that would otherwise be spread across 128 OSFP plugs.

Supply Chain Continuity: It retains the external, modular approach hyperscalers are accustomed to. MSAs ensure a diverse supply chain, and the format supports any optical interconnect reach (DR, LR, ZR, ZR+) and technology, including IMDD (intensity-modulation direct-detection), coherent, coherent-lite, micro-LEDs, and RF.

DSP Power and Cooling in XPO

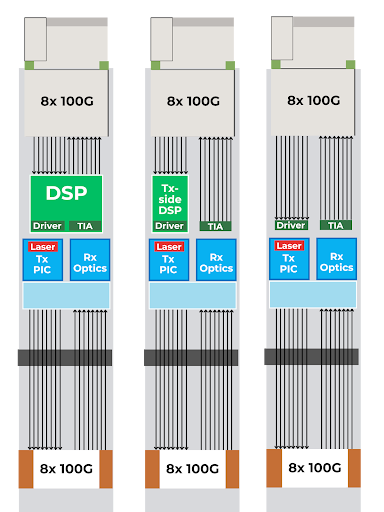

The primary downside of XPO’s pluggable format is signal degradation over the copper interconnects between the faceplate and the switch ASIC, which is typically corrected with power-hungry DSP chips. XPO offers three implementation paths to manage this, listed in decreasing order of power consumption:

Full Retimed: Utilizes a DSP on both the transmit and receive sides (highest power).

Linear Receive Optics (LRO / Half-Retimed): Utilizes a DSP only on the transmit side, saving power while maintaining signal integrity.

Linear Pluggable Optics (LPO): Eliminates the DSP entirely, relying only on drivers and equalizers, leaving the heavy lifting to the switch ASIC (lowest power).
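The three paths differ only in where a DSP sits, which can be captured in a small data structure. The sketch below is illustrative: the `RetimingMode` class and the use of DSP count as a proxy for relative power are assumptions made for this example, and no wattage figures are implied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetimingMode:
    """Hypothetical model of an XPO implementation path."""
    name: str
    tx_dsp: bool  # DSP on the transmit side
    rx_dsp: bool  # DSP on the receive side

    @property
    def dsp_count(self) -> int:
        # Number of directions that carry a DSP; used only as a rough
        # proxy for relative power, not as a measured value.
        return int(self.tx_dsp) + int(self.rx_dsp)


MODES = [
    RetimingMode("LPO", tx_dsp=False, rx_dsp=False),
    RetimingMode("Full Retimed", tx_dsp=True, rx_dsp=True),
    RetimingMode("LRO (Half-Retimed)", tx_dsp=True, rx_dsp=False),
]


def by_decreasing_dsp(modes):
    """Order modes from most to fewest DSP directions."""
    return sorted(modes, key=lambda m: m.dsp_count, reverse=True)


for mode in by_decreasing_dsp(MODES):
    print(f"{mode.name}: DSP on {mode.dsp_count} direction(s)")
```

Sorting by DSP count recovers the same ordering as the list above: Full Retimed, then LRO, then LPO.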

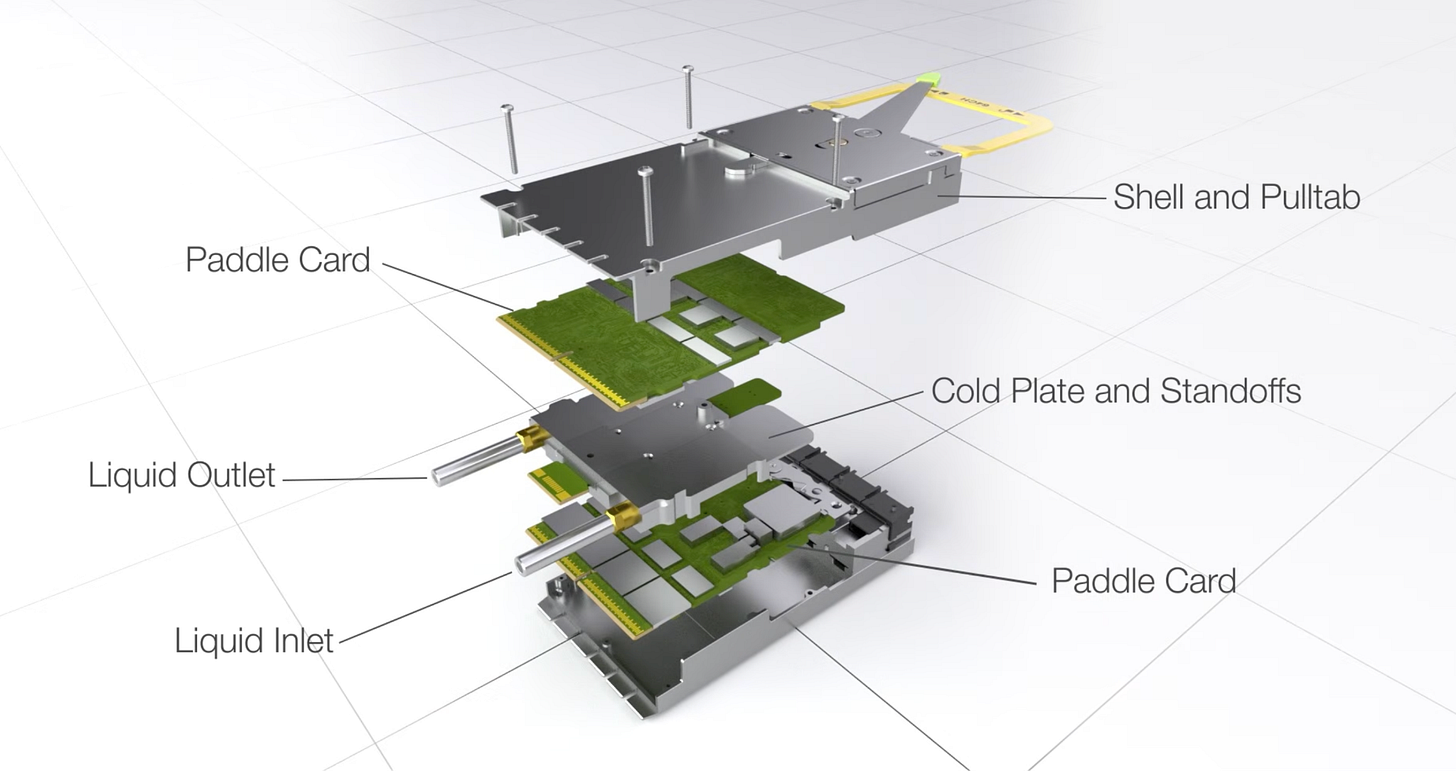

Regardless of the DSP architecture, XPO’s immense bandwidth density makes traditional air cooling impossible. Liquid cooling is essential. Each XPO plug features an integrated cold plate designed to dissipate roughly 400W. While some legacy solutions attempt to bolt cold plates onto the exterior of OSFP plugs, XPO’s natively integrated liquid cooling is vastly more efficient. This also means that racks using XPO need liquid cooling available, which may be difficult to retrofit in legacy infrastructure.
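To put the roughly 400W cold-plate figure in context, it can be scaled to a full 1OU faceplate and normalized per bit. This is a back-of-the-envelope sketch: it treats 400W as a thermal design ceiling rather than measured module power, and it reuses the 16-plug and 12.8T figures from earlier in the post. The function name `cooling_envelope` is illustrative.

```python
PLUG_COLD_PLATE_W = 400  # approximate cold-plate capacity per XPO plug
PLUG_TBPS = 12.8         # bandwidth per XPO plug
PLUGS_PER_OU = 16        # XPO plugs in a 1OU faceplate


def cooling_envelope(plug_watts: float, plug_tbps: float, plugs: int):
    """Return (total faceplate watts, energy ceiling in pJ per bit)."""
    total_watts = plug_watts * plugs
    # 1 W per Tb/s is 1e-12 J per bit, so W/Tbps reads directly as pJ/bit.
    pj_per_bit = plug_watts / plug_tbps
    return total_watts, pj_per_bit


total_w, pj_bit = cooling_envelope(PLUG_COLD_PLATE_W, PLUG_TBPS, PLUGS_PER_OU)
print(f"Faceplate cooling ceiling: {total_w / 1000:.1f} kW per 1OU")
print(f"Per-bit thermal ceiling:   {pj_bit:.2f} pJ/bit")
```

Actual draw depends on the DSP path chosen and should sit below these ceilings; the point is only that a fully populated faceplate is designed for several kilowatts of heat, which is why liquid cooling is a prerequisite.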

In comparison, CPO boasts the lowest power consumption per port across the board. Because optical-to-electrical conversion happens directly adjacent to the switch silicon, CPO bypasses the need for heavy external DSPs. How XPO ultimately stacks up against CPO economically depends heavily on which of the three XPO implementations (Full Retimed, LRO, or LPO) a data center adopts.

Numbers are approximate and vary by vendor and implementation.

That sets up the real question: which format actually wins, and where? The answer depends on how hyperscalers weigh three trade-offs that diverge as we push to higher lane speeds: speed, power, and modularity.

After the paywall:

A deep dive into the trilemma of speed, power, and modularity that dictates whether CPO or XPO wins in future networks.

A detailed breakdown of CPO’s superior energy efficiency, how serviceability concerns are solved, and its market impact on the pluggable optics ecosystem.

An analysis of the XPO-LPO and XPO-LRO “Goldilocks” approaches for 1.6T networking, and their limitations on lane speed and supply chain concentration.

A look at the divergence point: why XPO becomes power-prohibitive at 3.2T and beyond, making CPO the more attractive future-proof architecture.

The final verdict on where CPO makes the most sense (scale-out) and why XPO is the natural fit for scale-across networks.