🍪 TWiC: Intel with TeraFab+Sambanova+Google, Samsung and Anthropic 🚀, AGI CPU in China

This week in chips: Is Intel back?

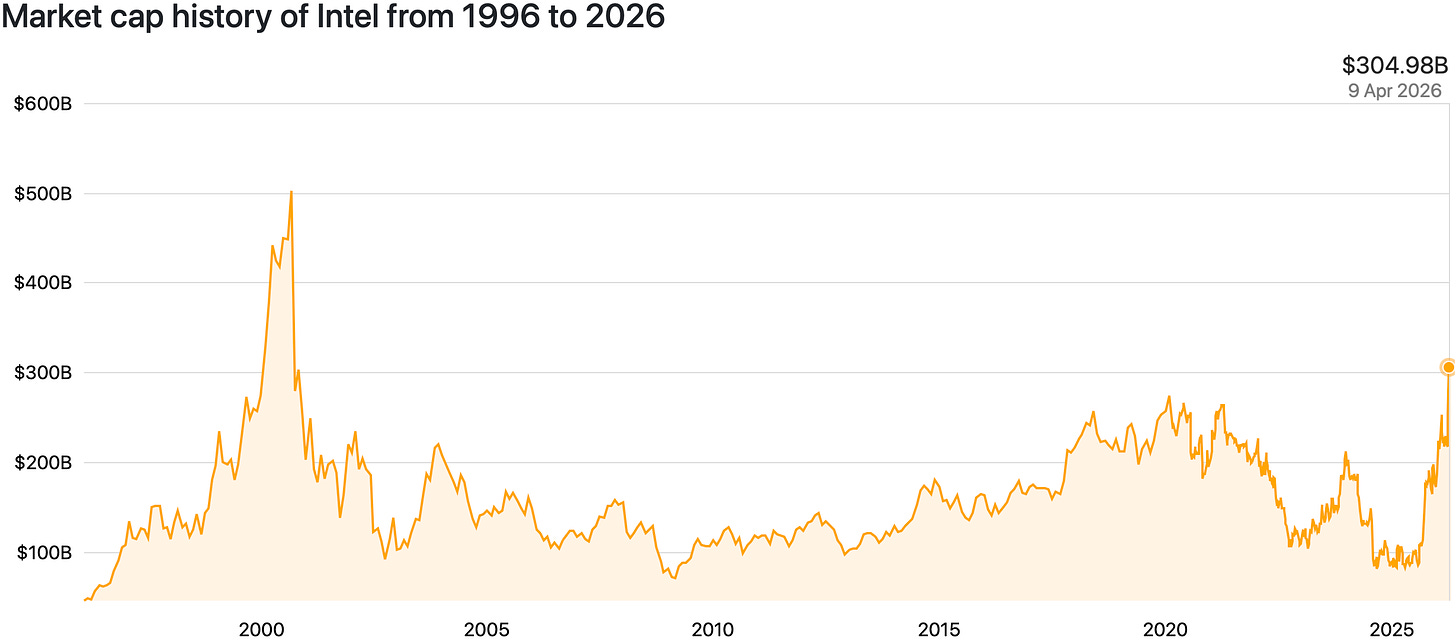

Intel’s market cap has crossed $300B, up 3x in a mere 6-9 months. With TSMC CoWoS still constrained, Intel’s EMIB is picking up steam through 2026, and the positive Intel vibes across recent news cycles have led us to ask: “Is Intel back?” In other news, Anthropic’s annualized revenue run rate is through the roof, Samsung profits are insane, and Arm finds a China loophole.

We’ve covered a lot this week, so here is a recap in case you haven’t had a chance to catch up.

MicroLEDs vs Lasers: The Linewidth Tradeoff — why linewidth matters and how LEDs and lasers differ (no paywall this week, enjoy!).

Busy week on the Semi Doped podcast (which crossed 10K downloads!):

Alright, let’s dive into the news.

Intel Joins Musk’s TeraFab Project

Intel announced it will join Elon Musk's TeraFab project alongside SpaceX, xAI, and Tesla. The TeraFab project aims to produce 1TW of compute capacity annually, and Intel would bring its expertise in advanced nodes, packaging, and silicon manufacturing at scale.

If Musk actually delivers on even 10% of TeraFab's ambition, Intel secures a whale customer, a strategic win, and a major turnaround. For the TeraFab project, Intel's involvement brings a more practical level of feasibility than Tesla/SpaceX/xAI trying to build a leading-edge fab from scratch.

This is a win-win, but it will have material impact only when we see significant wafer starts per month from Intel going towards the TeraFab project.

Heterogeneous systems for Agentic AI: Intel + SambaNova

In other Intel news, from the press release:

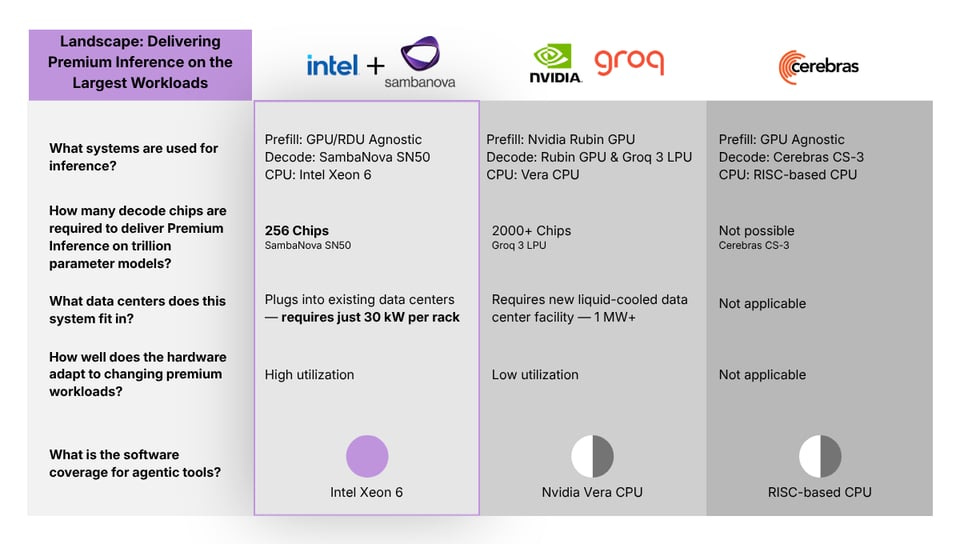

SambaNova today announced the next phase of its collaboration with Intel: a heterogeneous hardware solution that combines GPUs for prefill, Intel® Xeon® 6 processors as both host and “action” CPUs, and SambaNova RDUs for decode to deliver premium inference for the most demanding Agentic AI applications.

Just like we saw Nvidia pair Rubin GPUs with Groq LPUs and Vera CPUs, Intel and SambaNova are collaborating to provide specialized decode hardware and CPUs. Their comparison table below is interesting and points out something important: the number of decode chips required.

One of the major limitations of LPUs is their limited SRAM and the resulting need to deploy thousands of chips for large models. The complex compiler architecture of Groq chips means it's not easy to add HBM/DRAM to expand capacity at the expense of speed. This inelasticity is why I have believed that the Groq LPU was never a good fit long term, regardless of how much Nvidia paid for them.
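To see why SRAM-only designs end up needing thousands of chips, here is a rough back-of-the-envelope sketch. The model size, bytes per parameter, and per-chip SRAM figure are illustrative placeholders chosen for this example, not numbers from the press release or any vendor spec, and the estimate ignores KV cache, activations, and replication.

```python
def chips_needed(param_count: int, bytes_per_param: int, sram_bytes_per_chip: int) -> int:
    """Minimum chips required just to hold the model weights in on-chip SRAM."""
    weight_bytes = param_count * bytes_per_param
    # Ceiling division: a partially filled chip still counts as a whole chip.
    return -(-weight_bytes // sram_bytes_per_chip)


# Hypothetical inputs (assumptions for illustration only):
PARAMS = 1_000_000_000_000        # a 1-trillion-parameter model
BYTES_PER_PARAM = 1               # 8-bit weights
SRAM_PER_CHIP = 230_000_000       # 230 MB of SRAM per chip

n_chips = chips_needed(PARAMS, BYTES_PER_PARAM, SRAM_PER_CHIP)
print(f"Chips needed for weights alone: {n_chips}")
```

Under these assumed inputs the count lands in the thousands, which is the scaling problem described above. Adding a larger, slower memory tier reduces the chip count but changes the speed picture.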

SambaNova’s SN50 RDU architecture uses SRAM, HBM and DRAM, but their secret sauce is to “predetermine” where each piece of information is going to come from. RDU stands for Reconfigurable Dataflow Unit, and it establishes a data path between the different heterogeneous storage media to access data. This makes it vastly more flexible than Groq’s static approach. MatX also uses a hybrid SRAM+HBM system that is super interesting (check out Austin’s interview with the CEO linked above). We discussed all this in a previous TWiC edition.

This is just the first of many such heterogeneous solutions that will emerge. The effectiveness of each solution will depend on how data movement is architected between various pieces of disparate hardware. Exciting times.

(via SambaNova)

Intel + Google partner on Xeon CPUs and IPUs

In even more Intel news, Google and Intel are partnering to deploy Xeon CPUs and Infrastructure Processing Units (IPUs) for AI workloads. IPUs handle networking, storage, and security, much like BlueField Data Processing Units (DPUs) from Nvidia. The main idea is to offload some admin functions from the main CPU so that it can focus on more critical AI-related orchestration tasks.

In a way, this shows that not all CPUs are headed the Arm way, and x86 retains its stronghold in AI datacenter deployments. Google’s own Arm-based Axion CPUs were deployed for cloud infra and were focused on energy efficiency. In the AI era, raw power still matters and x86 Xeons still crush. If you want a head-to-head comparison of CPUs on the market, check out our CPU yellow pages.

(via Intel)

Samsung Projects Record Q1 Profit, Driven by Memory

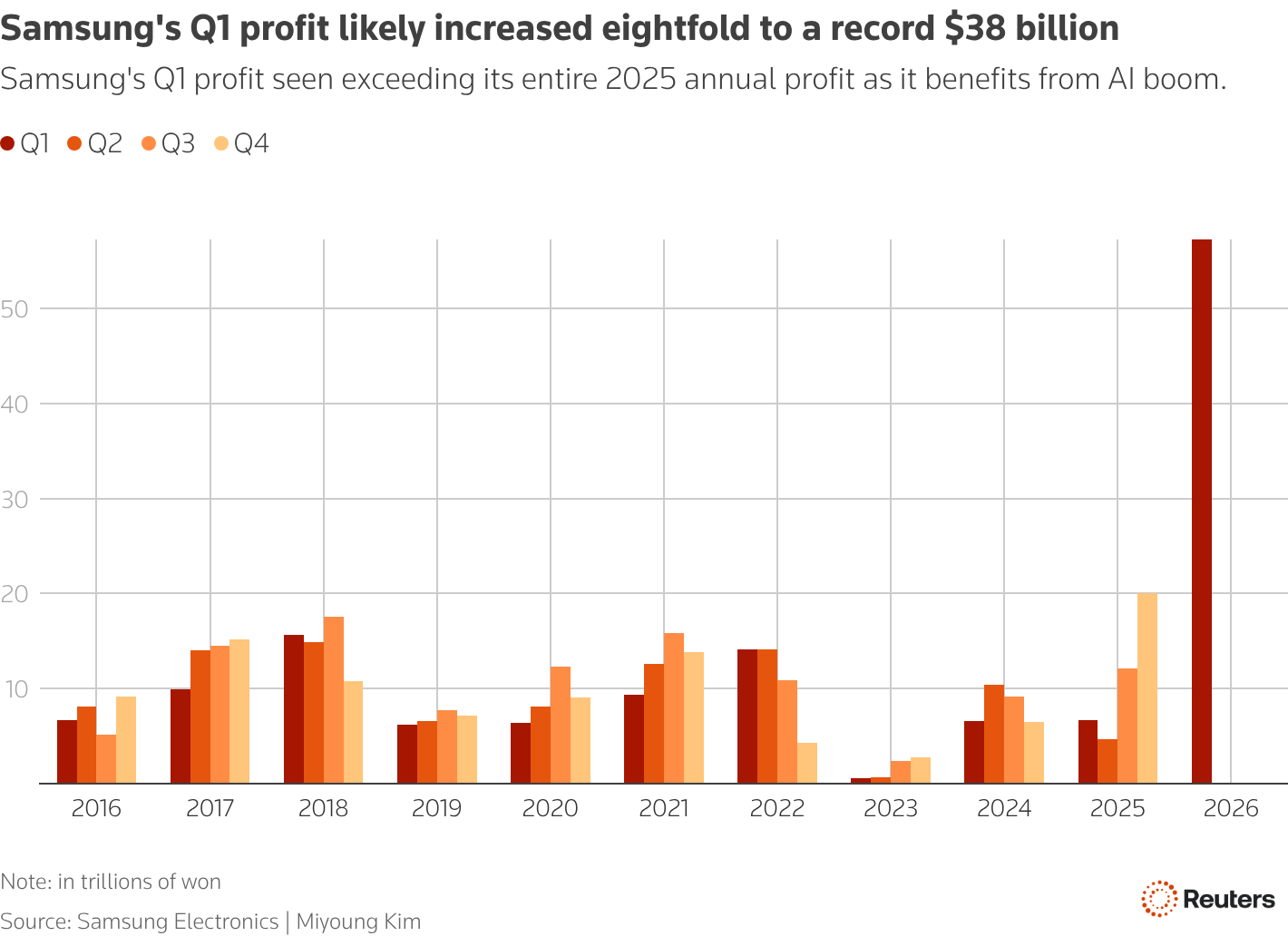

Samsung is projecting a blowout quarter: profits of 57T won ($38B), a 755% YoY increase from 6.7T won ($5B) in Q1 2025. 95% of the profits are from the memory sector, while the rest come from other sectors like mobile, which are flat or declining. Revenue is up 68% YoY. Other major players like SK Hynix and Micron are expected to post spectacular results in the coming quarter too.
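As a quick sanity check on those figures, the sketch below recomputes the year-over-year multiple and the implied memory profit from the rounded numbers quoted above. Because the inputs are rounded, the computed percentage comes out slightly below the quoted 755%, which presumably reflects unrounded figures.

```python
def yoy_change(current: float, prior: float) -> tuple[float, float]:
    """Return (multiple, percent_increase) of current over prior."""
    multiple = current / prior
    return multiple, (multiple - 1.0) * 100.0


# Rounded figures from the text, in trillions of won.
PROFIT_Q1_2026 = 57.0
PROFIT_Q1_2025 = 6.7
MEMORY_SHARE = 0.95  # share of profit attributed to memory

multiple, pct = yoy_change(PROFIT_Q1_2026, PROFIT_Q1_2025)
memory_profit = MEMORY_SHARE * PROFIT_Q1_2026

print(f"Profit multiple: {multiple:.1f}x (+{pct:.0f}% YoY)")
print(f"Implied memory profit: ~{memory_profit:.0f}T won")
```

The multiple works out to roughly 8.5x, and memory alone accounts for about 54T of the 57T won.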

We are now deep into the memory supercycle that we first wrote about in this newsletter six months ago.

In this week’s Semi Doped podcast, we discussed how spot DRAM prices are so high, due to AI sucking up all the supply, that consumer devices are left scrambling to get what silicon is left over. While such price spikes have ended in memory price crashes in the past, we argued in an article for paid subscribers that this time is different because of structural demand driven by AI, regardless of algorithmic improvements such as TurboQuant.

Samsung’s projected numbers for Q1 2026 validate the growing memory demand and the DRAM/NAND price hikes, which are expected to continue in the coming quarters. But notably, a large portion of the ~8x profit increase is coming from the DRAM market, and not necessarily HBM. This is worrisome because while HBM provides a structural floor, spot prices are far more volatile and things often turn around quickly.

Anthropic Hits $30B Revenue Run Rate, Overtakes OpenAI

Anthropic revealed a $30B annualized revenue run rate, more than triple the ~$9B at end-2025 and up from ~$19B in March. They simultaneously announced a deal for 3.5GW of Google TPUs (via Broadcom) starting in 2027, adding to the ~1GW already secured.

30x ARR growth in 16 months makes Anthropic the fastest-scaling enterprise company in history. But the infrastructure story is equally significant: they're hedging across AWS Trainium, Google TPUs, and Nvidia GPUs, a diversified compute strategy. This is a big plus for Broadcom, which now has a >$100B AI pipeline (possibly $200B?). In a weird turn of events, Anthropic is rumored to be starting development of its own chips. What else are they gonna do with the extra change when their ARR hits $100B next month? 🤡 (or maybe not?)
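For a sense of scale, the sketch below converts "30x in 16 months" into an implied compound monthly growth rate, then asks how long $30B would take to reach $100B if that pace simply continued. Constant compounding is an assumption made purely for illustration, not a forecast.

```python
import math


def monthly_growth(multiple: float, months: float) -> float:
    """Compound monthly growth rate implied by a total multiple over a period."""
    return multiple ** (1.0 / months) - 1.0


def months_to_reach(current: float, target: float, monthly_rate: float) -> float:
    """Months needed to grow from current to target at a constant monthly rate."""
    return math.log(target / current) / math.log(1.0 + monthly_rate)


rate = monthly_growth(30.0, 16.0)                    # 30x over 16 months
months_to_100b = months_to_reach(30.0, 100.0, rate)  # $30B -> $100B run rate

print(f"Implied growth: {rate * 100:.1f}% per month")
print(f"Months to $100B at that pace: {months_to_100b:.1f}")
```

That is roughly 24% compounded every month, and at that pace $100B is a little under six months away rather than next month.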

Arm’s Strategy of Selling AGI CPUs to China

This bit of news is slightly over a week old, but I feel it flew under the radar. Arm’s CEO Rene Haas said in an interview with ChinaDaily that Arm intends to sell its finished AGI CPU to Chinese customers. US/UK export controls restrict Arm from licensing its Neoverse V3 cores, but say nothing about selling finished chips instead of just IP. This is a key distinction: licensing IP would let Chinese firms design and produce their own chips indefinitely; buying finished CPUs from Arm does not transfer that design capability.

Selling CPUs in China unlocks a huge market that previously did not exist for Arm. The AGI CPU gives Chinese buyers immediate access to a modern, high-core-count, AI-optimized Arm server processor for orchestrating large AI/agentic workloads. The key announcement to track now is Arm’s first Chinese CPU customer, whoever that is.

(via ChinaDaily, DigiTimes)

Have a great weekend!

Regarding that appetite for ever-larger caches, Intel's "smart" base tile tech might be of interest. In Clearwater Forest, the base tile (manufactured in "Intel 3") is not just a convenient substrate to mount the CPU tiles on; it also provides the I/O and cache memory.

This type of advanced packaging, made possible with EMIB and Foveros, could well be Intel's key value proposition going forward. In several ways, Panther Lake was also important as proof of concept that Intel can mount and interconnect different types of chips (tiles) from different manufacturers and nodes.

How much of Anthropic's revenue do you think is coming from companies like Meta splurging on tokens, in internal competitions over who can use the most tokens, as we've seen in some recent news?