🍪 TWiC: AMD+Meta, New AI Chips, Citrini

This Week in Chips: Key developments across the semiconductor and adjacent universes.

In a way, TGIF because I’ve had a lot of changes to deal with this week. I’ll post an update about it at some point.

When combined with a short family getaway last weekend, I’m running behind on the deep-dive for next week. I’m planning to revisit Lumentum’s tech in a bit more depth to understand their laser dominance, where their moat really likes, and for how long. I’ll let you know what I find.

As Feb draws to a close, here are some articles you might have missed this month. The CPU posts were very popular; I recommend checking them out if you haven’t.

Links to previous 🍪 TWiC posts this month: Feb 6, Feb 13, Feb 20.

Semi Doped Podcast with Austin Lyons had some high engagement episodes this month. Lots of positive feedback from the interwebs; available on YouTube and all podcast platforms.

The future of financing AI infrastructure with Wayne Nelms, CTO Ornn

Optical networking supercycle - ALL the tech you NEED to know

I had a lot of plans to do scale-across, physical AI, and other stuff in Feb, but the industry moves to fast for me to actually plan ahead. I’ll just continue winging it.

Anyway, apart from NVIDIA’s earnings call this week (spoiler: they made a lot of money), there are some other interesting things to get to.

AMD—Meta to Deploy 6GW of AI Infrastructure

The latest infrastructure deal between AMD and Meta is an exact replica of their deal with OpenAI last year - similar GPU commitments (6GW), warrant shares (10%), etc. These deals make it possible for AMD to make a land grab in the GPU space where NVIDIA holds 90%+ market share, and rapidly rise overall share in GPU infrastructure. The 10% shareholder dilution from each of these deals isn’t really a big deal considering that the last tranche will only fully vest when 6GW is purchased and AMD share prices hit $600.

An important takeaway from the recent CPU posts (linked above) on this newsletter is that the AMD Venice with its 256c/512t core count, high per-core IPC, and x86 arch makes it the Swiss army knife for reasoning or action-oriented agentic AI. NVIDIA Vera’s low core count and ARM-based architecture, and Intel Diamond Rapids’ lack of SMT puts AMD as the prime CPU candidate (BTH makes a strong case too). The upcoming CPU explosion combined with these massive deals puts AMD in a perfect position to grab a larger market share from NVIDIA.

It comes down to whether AMD’s MI450 platform is ready for first token in 2026 as AMD says, or if it will be delayed to 2027 like SemiAnalysis says.

Chips for High Speed Inference

Three inference chip startups dropped announcements in the same week, raising a combined $1B+ in venture funding: Taalas, MatX, and SambaNova. Each one is betting on an alternative architecture to Nvidia’s GPU, but differ significantly on what the right approach looks like.

Taalas: Burn the Model into the Chip

Taalas came out of stealth with the most extreme approach of the three. They hardcode a model’s weights directly into the chip. They’re secretive about how exactly it is stored, only stating:

We basically have an architecture where we are embedding the models, and we are hard coding the models and the weights into our what we call the mask ROM recall fabric, which is paired with an SRAM recall fabric. Together, they are able to store both the model as well as do all the computations of KV cache.

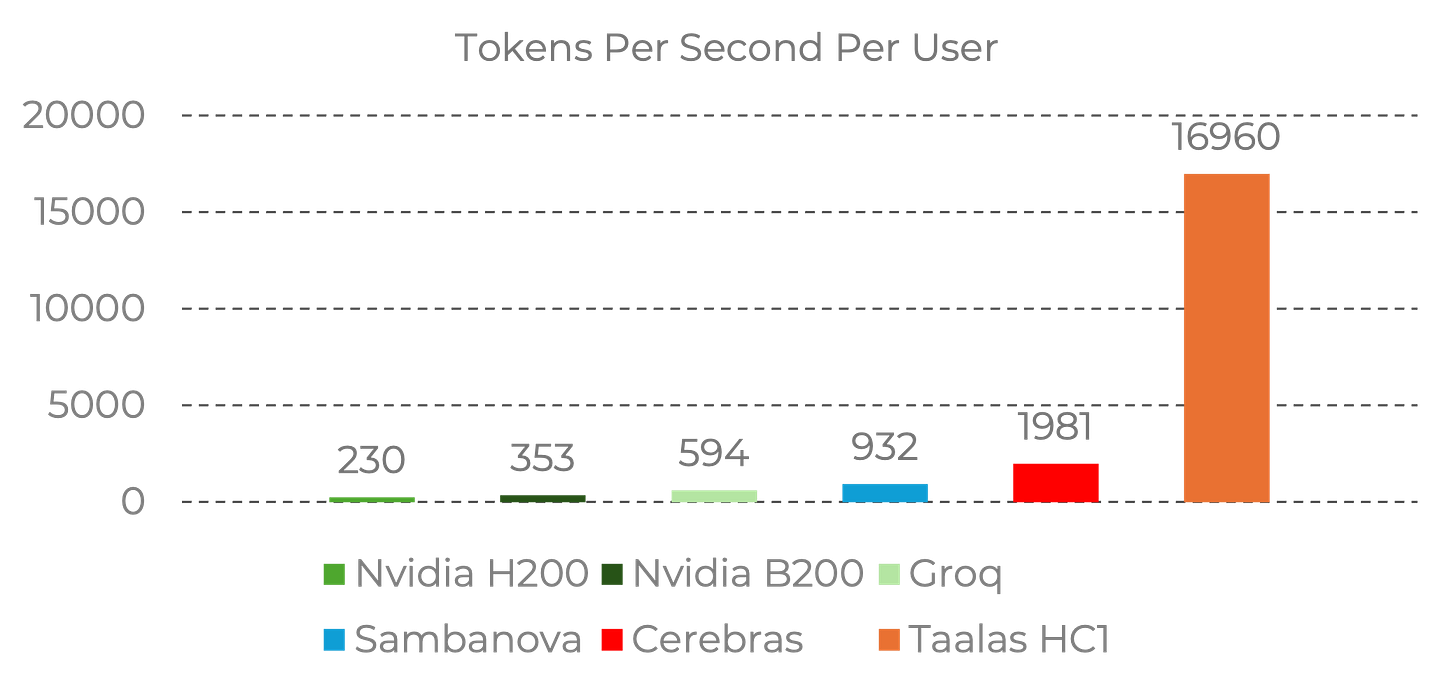

Their first chip named HardCore (HC1) is built on TSMC N6 and draws around 200 watts per card. They demoed it running Llama 3.1 8B at 17,000 tokens per second. HC2, targeting up to 20B parameters per chip, is expected in summer 2026.

The obvious tradeoff is that each chip is locked to one model. Taalas says swapping to a new model only requires changing two metal layers, not a full redesign, and they claim a two-month turnaround from frozen weights to deployable PCIe cards.

You can try inferencing on this hardware on their demo platform. I tried it, and it was incredibly fast. The answer appeared nearly instantly but was extraordinarily wrong. I do understand that this piece of news might still not be in the training data, but a little web search functionality in this day and age of AI won’t hurt to bring some confidence to people trying this tech out. It even got their own company wrong!

It remains to be seen if customers will commit to “static” infrastructure deployments without the option to upgrade. As model releases have yet to reach a steady state and the use cases are still evolving, it is hard to see big commitments to deploy “hardened” LLMs at scale. It could be useful for low cost, smaller scale deployments where a current model is sufficiently accurate to provide inference needs for 3-5 years before inference chips need to be replaced with updated hardware.

MatX: SRAM? HBM? No, Both.

Typically, the choice between HBM-based accelerators (NVIDIA, Google) and SRAM-based accelerators (Groq, Cerebras) is an either/or situation which we have discussed in quite some depth. There is always a latency versus throughput tradeoff that has to be contended with; SRAM-based approaches give low latency but cannot support large models, while HBM-based approaches support large datasets, but have higher latency.

MatX’s approach is to use both to bridge the gap between latency and throughput. In an interview, CEO Reiner Pope explains how MatX broke up a large systolic array while preserving that energy and area efficiency systolic arrays are known for, and designed a freshly minted 4-bit variable precision numeric format to deliver the best AI chips at scale, even if it comes at the cost of smaller scale deployments and complexity. From Pope on X:

Mike Gunter and I started MatX because we felt that the best chip for LLMs should be designed from first principles with a deep understanding of what LLMs need and how they will evolve. We are willing to give up on small-model performance, low-volume workloads, and even ease of programming to deliver on such a chip.

They claim higher throughput on LLMs than any announced system while matching the latency of SRAM-first designs, with over 2,000 tok/s on large MoE models. And unlike Taalas and SambaNova, MatX says their chip handles training, RL, and inference — not just decode. That’s a much broader TAM play if they can pull it off.

The $500M Series B was led by Jane Street and Situational Awareness, with Marvell Technology and the Stripe co-founders participating. Tape-out is planned within the year through TSMC, with shipments targeted for 2027.

SambaNova SN50: The Incumbent Alternative

SambaNova is the most mature of the three. They’ve been shipping hardware since 2021 and are now on their third generation with the SN50 RDU (Reconfigurable Dataflow Unit).

RDUs are a clever invention because they serve to minimize the number of off-chip data movements by having the compiler physically map all the required operations into different parts of the chip beforehand. They use a combination of SRAM, HBM and TBs of DRAM integrated into the accelerator card, so that any PCIe interfaces are avoided (d-Matrix puts DRAM on the card too, along with SRAM on chip). The compiler essentially decides what goes where, and when movements should happen. This approach allows it to host models up to 10T+ parameters and support context lengths up to 10M tokens.

SambaNova is leaning hard into agentic workloads, and the three-tier memory is the reason they can. Multiple models can sit in DRAM and HBM simultaneously. When an agent needs to switch from a reasoning model to a code model to a tool-use model mid-chain, the RDU hot-swaps the active model into SRAM in milliseconds. They call this “agentic caching” — inference contexts for multiple models persist in memory so you don’t pay the reload cost every time you switch.

They raised $350M+ alongside a multi-year strategic collaboration with Intel to deliver inference solutions. SoftBank is the first SN50 customer, deploying it in next-gen Japanese data centers. Shipments start H2 2026.

The Citrini Selloff

A post by Citrini Research caused big drops in the market because it described a futuristic scenario in 2028 where pervasive agentic AI has taken over most of the software industry, displacing white collar jobs, and massively disrupting the economy. The piece is purely fictional, but the market response was not.

Perhaps the most criticized part of the piece was the AI-led destruction of the moats held by companies such as DoorDash and Uber Eats. The piece envisioned a marketplace of vibe-coded alternatives to food delivery companies which will destroy the “habitual intermediation” moat held by the incumbents.

Ben Thompson pushes back hard on this argument in a Stratechery Plus article stating that DoorDash has an incredibly powerful network effect that strongly entrenches three parties: the driver network, the restaurant network, and the customer network. The ability to provide value to the customer (more selection), restaurants (more volume), and drivers (more pay) makes it an incredibly hard business model to displace with merely vibe-coded alternatives.

A nice piece by Citadel Securities takes a much more data-driven, level-headed rebuttal to the original article. They argue that St Louis Fed data does not show that AI is actually displacing jobs, but could instead complement them — just like the introduction of Microsoft Excel did not destroy desk work jobs, but instead morphed them into a different function.

AI is also constrained by physics and finance: energy, raw materials, and manufacturing capacity could act as a natural limiter of exponentials, while if the marginal cost of compute rises above the marginal cost of human labor, then AI substitution instantly fails in an economic sense.

Everything is still speculation and the discussion on the future of AI is fantastic, but history’s lessons tells us that when productivity jumps occur, humans only work more, not less.

Would love it if you could answer this simple question. 🙏🏽

I think you could experiment with the format of this column a bit. Perhaps more links and shorter commentaries would make sense, similar to Construction Physics' weekly "Reading List" posts (I see you are also a subscriber of that blog).

Question: is the SemiDoped pod available on Apple Podcasts and other platforms or only YT?